Desktop Is Back: How LLMs Are Rewriting the Logic of Computing Hardware

In a world where mobile traffic dominates, the most advanced computing tool of our time is pulling people back to their desks.

1. A Number That Shouldn’t Exist

Start with the data. According to Similarweb figures from August 2025 through February 2026, 71.74% of ChatGPT’s traffic comes from desktop, with mobile accounting for just 28.26%. Claude’s desktop share is even higher — its user base skews heavily toward developers and enterprise users, with Similarweb ranking “programming and developer software” as the top audience interest, overlapping closely with GitHub, Stack Overflow, and Notion. These aren’t two isolated data points. They’re the same signal: the heaviest AI users — people who face zero technical barriers to using their phones — are choosing to sit back down at a desk.

Put in context, this number looks strange. Mobile has long accounted for nearly 60% of global web traffic. People unlock their phones more than fifty times a day. By any conventional logic, the most important new computing tool of this era should have exploded on mobile first. It didn’t.

The hardware data points the same way. According to Gartner’s January 2026 report, global PC shipments exceeded 270 million units in 2025, up 9.1% year-over-year, the strongest annual growth in recent memory. AI PC penetration jumped from 15% in 2024 to 31% in 2025. Gartner projects it will exceed 55% in 2026; IDC’s long-range forecast puts it near 93% by 2028. This is a steep adoption curve, not a gradual recovery.
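The arithmetic behind these figures can be checked with a short sketch. It uses only the numbers quoted above; the function and variable names are illustrative, and the 2026 and 2028 values are treated as point estimates even though the text gives them as "exceed 55%" and "near 93%".

```python
# Figures quoted in the text (Gartner for 2024-2026, IDC for 2028).
# Assumption: forecasts are rounded to single point values for this sketch.
ai_pc_penetration = {2024: 15.0, 2025: 31.0, 2026: 55.0, 2028: 93.0}


def annual_point_gain(series):
    """Average percentage-point gain per year between consecutive data points."""
    years = sorted(series)
    return {
        (a, b): (series[b] - series[a]) / (b - a)
        for a, b in zip(years, years[1:])
    }


def implied_prior_year(current_units_m, growth_pct):
    """Back out last year's shipments from this year's total and growth rate."""
    return current_units_m / (1 + growth_pct / 100)


gains = annual_point_gain(ai_pc_penetration)
baseline_2024 = implied_prior_year(270.0, 9.1)

for (start, end), gain in gains.items():
    print(f"{start}-{end}: +{gain:.1f} points per year")
print(f"Implied 2024 shipments: about {baseline_2024:.1f} million units")
```

With these inputs the yearly gains come out to 16, 24 and 19 points, and the implied 2024 base is roughly 247 million units. That base is a floor, since the text says 2025 shipments exceeded 270 million.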

2. Two Axes: The Real Logic Behind Computing’s Evolution

To understand the Desktop’s return, you need to think clearly about one thing: what axis have computing devices actually been evolving along — from mainframes to PCs to smartphones to LLMs?

A common misreading frames this history as a “cognitive → action → cognitive” cycle. But that framing has a fundamental flaw: mainframes and PCs were both cognitive tools — financial analysis, document processing, data modeling. The cognitive demands are continuous with what we use LLMs for today, not discontinuous.

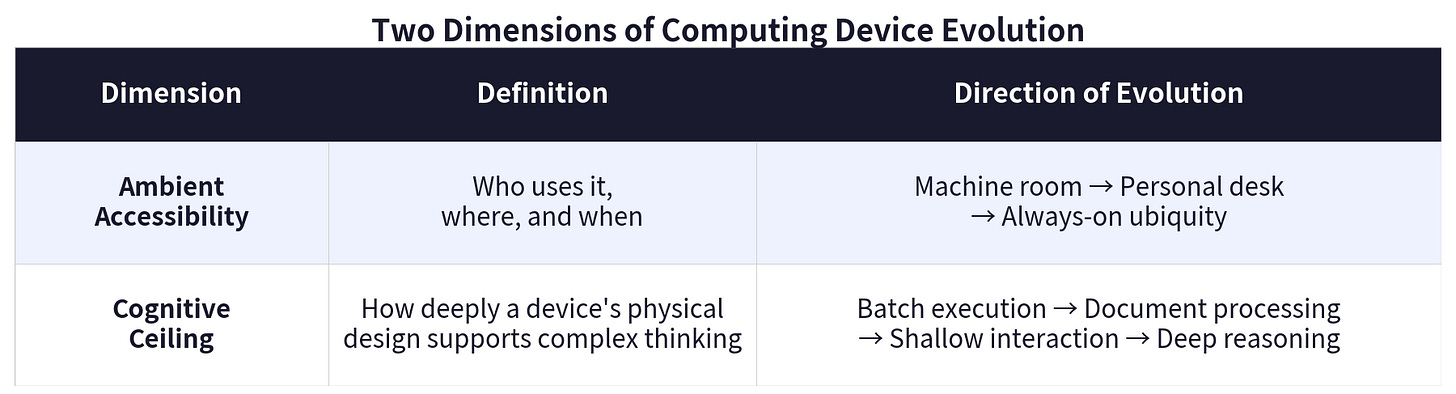

The real axes are two independent dimensions, evolving separately, combining differently in each era:

Dimension 1: Ambient Accessibility — who can use it, where, and when. From the mainframe’s machine-room exclusivity, to the PC’s desk-bound personal access, to the smartphone’s always-on ubiquity.

Dimension 2: Cognitive Ceiling — how deeply a device’s physical design can support complex thinking tasks. This isn’t about raw power getting better over time — it’s about tradeoffs. Every time a new device took over, it gave up some cognitive depth in exchange for something else.

Seen this way, history has never advanced both dimensions simultaneously. Every leap forward on one axis came at the cost of the other.

3. Four Transitions, One Logic

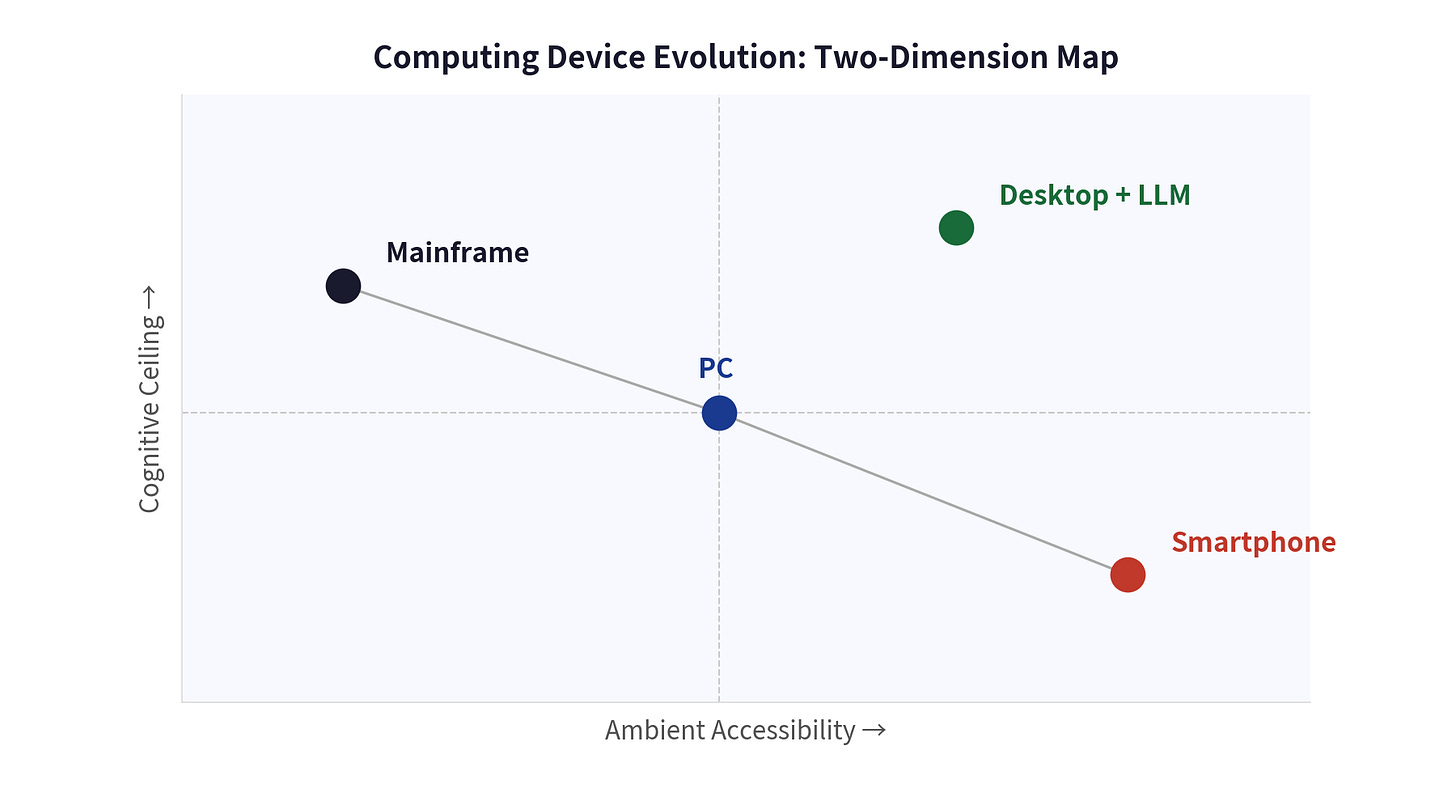

Place these two dimensions against history, and every major platform shift has a clean explanation: design alone never wins; need does. The winner is always the device that best fits the dimension that matters most at that moment.

Mainframes: Maximum cognitive depth, zero ambient accessibility

In the 1950s, an IBM mainframe occupied an entire room, cost millions of dollars, and required a dedicated team of operators. Users submitted “batch jobs” to operators and waited hours for printed results. Its cognitive capabilities — payroll processing, census tabulation, ballistic calculation — were unmatched. But its users were never individuals. They were institutions. Computing was an administrative resource, not a personal tool. Ambient accessibility: effectively zero.

PCs: Accessibility unlocked — cognitive depth constrained relative to contemporary mainframes, but delivered to individuals for the first time

In 1977, the Apple II launched at $1,298. In 1979, VisiCalc — the first spreadsheet — let accountants complete in seconds what had taken hours by hand. WordStar let writers revise without retyping. The PC’s significance wasn’t that it outperformed mainframes — it didn’t. Its significance was that it put computing power in individual hands for the first time.

The PC era was the first great leap in ambient accessibility: from institutional to personal. It gave up cognitive depth relative to contemporary mainframes: a 1990s high-end mainframe could run complex batch operations that a PC of the same era couldn’t. But that tradeoff was right: it made computing a tool for ordinary people. Computing moved from the machine room to the desk. From privilege to utility. By the end of the 1990s, global PC shipments had surpassed 100 million units a year.

Smartphones: Ambient accessibility maximized — cognitive depth constrained by design

On January 9, 2007, Steve Jobs took the stage: “Today, Apple is going to reinvent the phone.” The smartphone’s core proposition: software is an extension of the body. Hardware is part of the skin. Minimal configuration, because making you think about settings is an offense. Always available in three seconds, because the goal is to make you forget the system exists.

This philosophy pushed ambient accessibility to its human limit: two billion people, supercomputers in their pockets, available at any moment. But achieving this required systematically compressing everything cognitive depth depends on — single-window interfaces eliminated parallel information processing; fragmented attention patterns destroyed context maintenance; touch-first input constrained long-form text entry.

Nokia held around 40% of the mobile phone market in 2007, yet by 2013 it had agreed to sell its handset business to Microsoft. It lost not because a better phone beat it, but because someone redefined what a phone was.

Desktop + LLM: Cognitive depth surges — ambient accessibility retreats to the desk

By 2023, PC shipments had fallen more than 25% from their peak — a casualty of the smartphone era. Then something unexpected happened.

In November 2022, ChatGPT launched. One million users in five days. One hundred million monthly active users in two months. But what matters isn’t the growth rate — it’s where people are using it. 71.74% from desktop.

The cognitive depth LLMs bring is genuinely unprecedented: reading an entire contract and identifying risk clauses; understanding the architectural logic of a complex codebase; maintaining a full reasoning context across dozens of turns; simultaneously processing multiple interrelated information sources. But the environment that depth requires pulls users back to a desk: long text demands a keyboard; extended analysis demands a large screen; parallel tasks demand multiple windows.

4. The Smartphone’s Structural Contradiction — and the Next Hardware Leap

A visible tension now exists: cognitive computing demands “sit down and think deeply,” while the smartphone demands “respond instantly, anywhere.” These are mutually exclusive design requirements. Before LLMs, this tension was nearly invisible. LLMs made the invisible visible.

The smartphone’s success on the ambient accessibility dimension is itself the structural constraint on its cognitive ceiling. This isn’t an execution problem for any particular manufacturer. It’s a judgment about the design itself.

Before LLMs, this tradeoff was reasonable. But when software’s cognitive depth requirements exceed what the smartphone’s design can support, software starts forcing hardware’s hand. Every time this mismatch has appeared in history, device designs have been rebuilt: mainframes couldn’t serve individuals, so PCs appeared; PCs couldn’t serve mobile needs, so smartphones appeared; smartphones constrain cognitive depth, and the next device is being forced into existence.

This restructuring has the same shape as the last one: the new device won’t be “a better smartphone.” It will be a new species — pushing cognitive depth back to Desktop + LLM levels without retreating on ambient accessibility.

Feature phones didn’t disappear — people still use them. Smartphones won’t disappear either. But once the new species arrives, it will hold a decisive advantage in cognitive depth use cases.

Desktop is the transitional solution. It’s the closest existing design to what cognitive depth demands, but it still requires a fixed location — it hasn’t resolved the underlying tension. Its return is a signal, not a destination.

Data Sources

PC shipments and AI PC forecasts: Gartner Worldwide PC Tracker (October 2025 and January 2026); IDC Worldwide Quarterly Personal Computing Device Tracker (2025). AI platform traffic data: Similarweb (August 2025–February 2026). ChatGPT launch data: OpenAI announcements and press reports (November 2022–January 2023). Historical computing data: public historical sources. Charts by the author.