Google’s Bid to Become the “Brain” of Robotics: The Platform Ambition and Competitive Stakes Behind Gemini Robotics-ER 1.6

On April 14, 2026, Google DeepMind published a brief technical announcement on its official blog: Gemini Robotics-ER 1.6 was live and immediately accessible to developers via the Gemini API and Google AI Studio. Paired with a joint statement from Boston Dynamics the same day, the release was swiftly framed by IEEE Spectrum, SiliconANGLE, and other industry outlets as a landmark moment in Physical AI. To read it merely as an incremental upgrade, however, would be to badly underestimate the strategic intent behind it.

The most revealing detail is a single performance figure: on the Instrument Reading task, ER 1.6 vaults from ER 1.5’s 23% to a peak of 93% (with Agentic Vision enabled). That 70-percentage-point gap is not simply a linear gain in precision — it signals that Google is systematically embedding large-language-model reasoning into the cognitive core of robot hardware, anchored by concrete industrial use cases, as the foundation for a much larger platform play.

I. From 23% to 93%: The Architectural Logic Behind a Precision Leap

To grasp the technical significance of ER 1.6, one must first understand the Dual-Model Architecture Google DeepMind has designed for Gemini Robotics. The ER (Embodied Reasoning) model acts as the high-level strategic planner: it interprets open-ended task goals, reads complex environments, and judges task completion. The VLA (Vision-Language-Action) model plays the role of the motor cortex, translating those strategic decisions into physical actuator commands. Together they embody a “slow thinking + fast execution” division of cognitive labor, loosely analogous to the split in the human nervous system between prefrontal-cortex planning and spinal reflexes.
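The division of labor described above can be sketched as a simple control loop. This is a minimal illustration only: `Subtask`, `run_task`, and the `plan`, `execute`, and `is_done` callables are hypothetical names standing in for the reasoning layer and the VLA-style controller, not part of any Google API, and the retry policy is an assumption.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Subtask:
    """One high-level step produced by the reasoning layer (hypothetical type)."""
    description: str


def run_task(
    goal: str,
    plan: Callable[[str], List[Subtask]],   # slow layer: reasoning model breaks the goal into steps
    execute: Callable[[Subtask], None],     # fast layer: VLA-style controller drives the actuators
    is_done: Callable[[Subtask], bool],     # slow layer again: judges whether the step succeeded
    max_attempts: int = 3,                  # assumed retry budget per step
) -> List[str]:
    """Plan once, then run each subtask until the planner judges it complete."""
    log: List[str] = []
    for subtask in plan(goal):
        for attempt in range(1, max_attempts + 1):
            execute(subtask)
            if is_done(subtask):
                log.append(f"{subtask.description}: ok after {attempt} attempt(s)")
                break
        else:
            # Retry budget exhausted: abandon the remaining steps.
            log.append(f"{subtask.description}: failed after {max_attempts} attempt(s)")
            break
    return log
```

Passing stub callables for the three roles is enough to exercise the loop. A production system would presumably replan after a failure rather than stop, but that policy is outside what the announcement describes.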

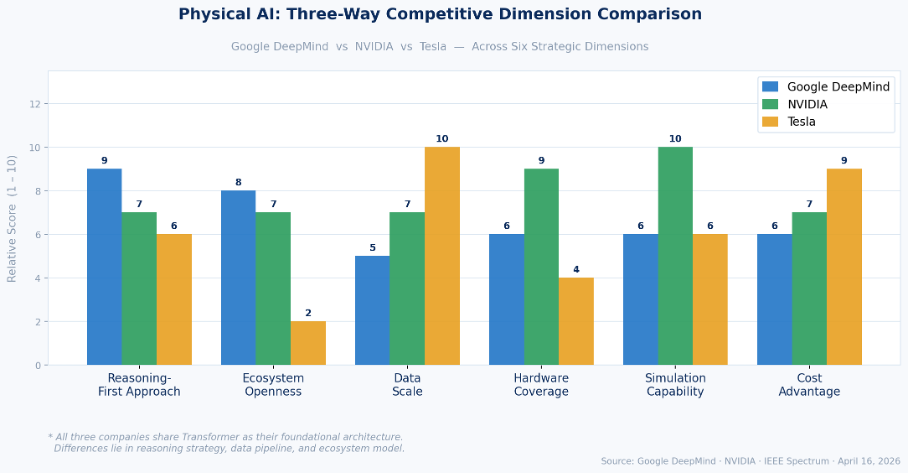

ER 1.6’s core breakthrough is its “reasoning-first” philosophy. Google DeepMind, NVIDIA, and Tesla all build on the Transformer architecture, the common ground of modern AI. The real divergence lies in reasoning strategy and data pipeline. NVIDIA’s Isaac GR00T N1.6 pairs a Cosmos-Reason VLM with a 32-layer Diffusion Transformer and leans on large-scale simulated data, transferred from simulation to real hardware (sim-to-real), for generalized manipulation. Tesla’s Transformer models draw on the vast real-world driving data accumulated through FSD, betting primarily on data scale. DeepMind’s path is different: rather than memorizing the appearance of more objects, it aims to teach the model the logic of the physical world. According to TechBuzz.ai, this lets Spot, when confronting a closed door, assess whether it is locked, estimate its weight, consider alternative routes, and adapt its approach to the context.

Instrument reading is the clearest proof of that philosophy. Industrial gauges (pressure meters, thermometers, chemical sight glasses) are centuries-old information infrastructure that was never built for machine perception: needles, tick marks, and finely etched numerals set against environments plagued by glare, reflection, or grime. ER 1.6 deploys Agentic Vision, which fuses visual reasoning with code execution so that the model can dynamically adjust its observation strategy rather than rely on static, single-viewpoint classification, the limitation behind ER 1.5’s 23% success rate on the same task. The base ER 1.6 model already reaches 86%; enabling Agentic Vision pushes that to 93%.
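To make “visual reasoning plus code execution” concrete, here is a sketch of the kind of arithmetic a model could run once it has located the needle and the end ticks of an analog dial. The announcement does not describe what code Agentic Vision actually executes, so the function `gauge_value`, its parameters, and the assumption of a linear scale are all illustrative.

```python
def gauge_value(
    needle_deg: float,   # measured needle angle, in degrees
    min_deg: float,      # angle of the tick for the lowest scale value
    max_deg: float,      # angle of the tick for the highest scale value
    min_value: float,    # value printed at the lowest tick
    max_value: float,    # value printed at the highest tick
) -> float:
    """Linearly map a needle angle onto the dial's scale (assumes a linear dial)."""
    sweep = max_deg - min_deg
    if sweep == 0:
        raise ValueError("dial sweep must be non-zero")
    fraction = (needle_deg - min_deg) / sweep
    if not 0.0 <= fraction <= 1.0:
        # A needle outside the sweep usually means the angles were misdetected.
        raise ValueError("needle angle lies outside the dial sweep")
    return min_value + fraction * (max_value - min_value)


# A 0-10 bar gauge whose scale sweeps 270 degrees, needle at mid-sweep.
print(gauge_value(135.0, 0.0, 270.0, 0.0, 10.0))  # 5.0
```

The out-of-range check hints at why an iterative observation strategy helps: an implausible result is a signal to zoom, re-crop, or look again rather than report a number.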

Multi-View Success Detection is equally significant. Before this capability, robots could barely judge autonomously whether a task was truly complete — especially in occluded, dim, or dynamic environments — forcing industrial deployments to retain extensive human oversight. By fusing data from top-down and wrist-mounted cameras, ER 1.6 allows Spot to decide independently whether to retry or advance without human intervention. According to DeepMind researchers Laura Graesser and Peng Xu, this capability is the essential prerequisite for moving industrial autonomy from “scripted demonstrations” to genuine deployment.
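The retry-or-advance decision can be pictured as a small fusion rule over per-camera verdicts. ER 1.6 presumably reasons over the images jointly inside the model, so the rule-based version below only illustrates the decision interface. The name `fuse_views`, the three-valued verdicts, and the conservative policy are assumptions, not a description of DeepMind’s method.

```python
from typing import Dict, Optional


def fuse_views(verdicts: Dict[str, Optional[bool]]) -> str:
    """Combine per-camera verdicts into one decision.

    Each verdict is True (success visible), False (failure visible),
    or None (view occluded or inconclusive).
    Returns "advance", "retry", or "reobserve".
    """
    informative = [v for v in verdicts.values() if v is not None]
    if not informative:
        return "reobserve"   # no camera could tell: move and look again
    if all(informative):
        return "advance"     # every informative view agrees the task succeeded
    return "retry"           # at least one view saw a failure


print(fuse_views({"overhead": True, "wrist": None}))   # advance
print(fuse_views({"overhead": True, "wrist": False}))  # retry
```

Treating a single negative view as decisive is a deliberately cautious choice: in an industrial setting a false “advance” is typically more costly than an unnecessary retry.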

II. Boston Dynamics’ Fateful Loop: Why Google Had to Reclaim What It Once Sold

Google acquired Boston Dynamics in 2013 during Andy Rubin’s robotics expansion era. Yet fewer than four years later, Alphabet sold it to SoftBank in 2017 at an undisclosed price. The stated reason: Boston Dynamics relied heavily on DARPA military contracts, and Alphabet explicitly refused to become a defense contractor. The deeper reason: during the cost-cutting period led by CFO Ruth Porat, Alphabet grew impatient with projects unlikely to generate near-term revenue.

That decision has since been widely regarded as one of Google’s costliest strategic missteps. In 2020, Hyundai Motor Group acquired an 80% stake in Boston Dynamics for approximately $880 million (implying a $1.1 billion valuation). By that point Spot had entered commercial sales in over 40 countries; IEEE Spectrum reports several thousand Spot robots are currently in active industrial deployment worldwide. Boston Dynamics officially unveiled the commercial electric Atlas at CES in January 2026, with first deliveries to Hyundai’s Robot Metaplant Application Center (RMAC) and Google DeepMind scheduled later in 2026.

Google’s 2026 collaboration with Boston Dynamics is, in essence, a “re-binding without equity”: Google need not reclaim ownership, because supplying the most critical AI software layer is enough to embed it in Boston Dynamics’ product value chain. Per CNBC’s March reporting, Google DeepMind had already signed cooperation agreements with Apptronik and Agile Robots, and in February 2026 Alphabet folded its Intrinsic robotics software unit into Google proper. CEO Wendy Tan White positioned Intrinsic as “the Android of robotics”, a foundational OS open to all robot hardware manufacturers.

The logic is clear-cut. Google’s 2013 attempt at vertical integration failed, so it is now pursuing a horizontal platform strategy: not building robot hardware, but becoming the brain inside every robot. Opening ER 1.6 via API means any hardware maker can plug into Google’s reasoning engine. This is the Android smartphone playbook of open software to capture ecosystem coverage, with monetization this time coming from the cloud via Vertex AI.

III. Market Structure: The Current Scale and Future Runway of Physical AI

Industrial Robotics Base Market: Per the International Federation of Robotics (IFR), global installations reached roughly 542,000 units in 2024, the fourth consecutive year above 500,000, with a market value of approximately $16.7 billion. This is the installed-base foundation Physical AI is penetrating.

Physical AI for Industrial Robotics (the intelligence overlay layer): Market size was approximately $8.6 billion in 2025. MarketIntelo projects growth to $117.4 billion by 2034 at a CAGR of 34.7% — one of the highest-growth tech sub-sectors in the period.

Broad Physical AI (autonomous vehicles, humanoids, intelligent infrastructure): Future Markets estimates the global Physical AI market at roughly $383 billion in 2026, projected to reach $32.6 trillion by 2040.
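As a sanity check on the two forecasts above, the implied compound annual growth rate can be recomputed from the endpoints alone. The sketch assumes each projection compounds annually from the stated start year to the stated end year; the source reports may use a different base year or forecast window, which would shift the result.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Industrial Physical AI: $8.6B (2025) to $117.4B (2034), values in $ billions.
industrial = implied_cagr(8.6, 117.4, 2034 - 2025)

# Broad Physical AI: $383B (2026) to $32.6T (2040), values in $ billions.
broad = implied_cagr(383.0, 32_600.0, 2040 - 2026)

print(f"industrial overlay: {industrial:.1%}")  # about 33.7%
print(f"broad Physical AI:  {broad:.1%}")       # about 37.4%
```

Under these assumptions the industrial figure comes out about one point below the quoted 34.7%, a gap small enough to be explained by a different base year. The broad-market projection implies roughly 37% a year sustained for fourteen years.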

The defining feature distinguishing Physical AI from digital AI is that competitive outcomes remain unsettled. In large language models, the top tier has largely crystallized (OpenAI, Anthropic, Google). In Physical AI, given the extreme heterogeneity of industrial settings, no company has yet established general manipulation capability replicable across contexts. Early movers’ data and deployment advantages will compound into a flywheel effect: more deployments, more real-world data, stronger models, higher barriers to entry.

Industrial inspection has emerged as one of the first Physical AI segments to achieve scaled commercial deployment — and it is Spot’s core use case. The robot’s value proposition is well validated: reducing personnel exposure to hazardous zones, enabling 24/7 continuous inspection, and supporting predictive maintenance. ER 1.6’s 93% instrument-reading accuracy directly unlocks analog gauge interpretation tasks previously reserved for human specialists — a critical lever for Spot’s land-and-expand strategy with existing customers.

IV. Competitive Matrix: The Three-Way Rivalry Among NVIDIA, Tesla, and Google

NVIDIA (Isaac Platform): The acknowledged current leader. Architecturally it pairs a Transformer-based Cosmos-Reason VLM, used for scene understanding and task planning, with a 32-layer Diffusion Transformer for continuous motion generation. Isaac GR00T N1.6 was announced at CES in January 2026; Isaac Sim and Isaac Lab form a complete simulation-training-deployment loop. The Isaac partner ecosystem spans over 1,200 companies and more than 400 North American automation solution providers. Because NVIDIA GPUs are the de facto standard for both model training and real-time inference, the company holds an ecosystem moat that rivals have yet to match. At GTC 2026, NVIDIA positioned Physical AI as the next trillion-dollar incremental opportunity.

Tesla (Optimus + FSD Data Flywheel): Equally grounded in Transformer architecture, Tesla ports the visual perception and edge-inference capabilities built on FSD’s billions of real-world driving miles into the humanoid context. Optimus is already performing tasks inside the Texas Gigafactory, though scaled commercial deployment remains distant. Tesla’s structural advantages are dramatically lower marginal hardware cost (driven by vehicle manufacturing scale) and its proprietary AI5 chip. Its structural weakness is near-zero ecosystem openness: the stack is fully closed and vertically integrated.

Google DeepMind (Gemini Robotics + Intrinsic + Open API): Google’s path is open platform + reasoning-first + strategic partnerships. Opening ER 1.6 via the Gemini API expands deployment footprint at near-zero marginal cost while monetizing through Vertex AI. Its partnership network covers Boston Dynamics (industrial inspection), Apptronik (humanoid robots), and Agile Robots (European manufacturing).

All three are Transformer-based; the core differences are not in architecture but in reasoning strategy and ecosystem model. The competition ultimately reduces to one question: what constitutes the primary moat in Physical AI — hardware vertical integration (Tesla), compute ecosystem (NVIDIA), or an open reasoning software platform (Google)? The historical analogy is the mobile internet’s three-way contest among Apple (vertical), Qualcomm (chip platform), and Android/Google (open ecosystem) — Android won the global shipment war through ecosystem coverage, even as Apple captured premium profits. Whether Physical AI will reprise that history is the most consequential industry thesis to track over the next five years.

V. Google’s Grand Design: How Far Can the “Robot Android” Go?

Software Infrastructure (Intrinsic): In February 2026, Alphabet formally folded Intrinsic into Google proper, granting it direct access to Gemini models and DeepMind research. Intrinsic’s mandate is to supply a universal SDK and Digital Twin infrastructure to all robot manufacturers, compressing development cycles from months to weeks. Per CNBC, Intrinsic will also help Google build its own data centers — meaning robotics technology creates direct value for Google’s largest capital expenditure, forming a distinctive internal use case.

Hardware Partner Ecosystem: Beyond Boston Dynamics, Google’s Apptronik partnership was announced in early 2025 (building the next-generation humanoid on Gemini 2.0); its Agile Robots collaboration, announced in March 2026, targets European manufacturing. The strategy lets training data flow back from many hardware platforms without Google building any hardware business of its own: every Gemini Robotics-equipped robot feeds real-world manipulation data back to Google, reinforcing the iteration flywheel.

Cloud Monetization via Vertex AI: Enterprise-scale ER 1.6 deployment will almost invariably depend on Google Cloud. Per previews from Google Cloud Next (April 22–24), ER 1.6 will be commercialized as a new Vertex AI service. Every API call from a connected robot is a billable Google Cloud request — a highly predictable revenue structure that scales naturally with the installed base of industrial robots.

Google DeepMind specifically highlighted ER 1.6 as “our safest robotics model to date”, demonstrating superior safety-policy compliance on adversarial spatial reasoning tasks. This claim landed in the same week Anthropic’s Mythos raised significant alarm among Washington policymakers and the cybersecurity community. As AI safety becomes an increasingly decisive criterion in government procurement and enterprise compliance, front-loading Physical AI safety as a core selling point is a deliberate strategic narrative for Google’s public-sector clients.

VI. Risks: Can the Moat Withstand Low-Cost Disruption?

Price Pressure from Chinese Manufacturers: Companies such as Unitree Robotics and Fourier Intelligence are launching quadruped and humanoid robots at one-fifth to one-tenth of Spot’s price. The Unitree Go2 Education version retails at roughly $16,000, far below Spot’s $75,000 starting price. Whether software-intelligence premiums can offset that hardware gap — especially in emerging markets — will be a protracted commercial validation question. China’s domestic AI stack (Baidu ERNIE, Alibaba Qwen, Huawei Pangu) is also rapidly building its own embodied intelligence layer; geopolitical fragmentation may substantially undercut the “one model for all the world’s robots” vision.

Data Acquisition Speed Gap: DeepMind’s reasoning-first architecture requires a continuous flow of real-world deployment data to keep improving, while Tesla — with millions of vehicles on roads — has built an effectively insurmountable advantage in data velocity. Boston Dynamics operates several thousand Spots globally; Tesla’s fleet data pipeline runs at the scale of tens of billions of miles. This asymmetry is the deepest structural constraint on Gemini Robotics closing the gap with Tesla in general manipulation.

Enterprise Procurement Cycles: Industrial robot procurement takes 18 to 36 months, involving safety certifications (CE, UL, ISO), plant retrofit costs, and labor agreements. Even if ER 1.6 is technically mature today, converting an API launch into scaled enterprise revenue will require another two to three years.

VII. Historical Precedent: Where Will Physical AI’s “Android Moment” Arrive?

The mobile internet of 2008 offers an instructive analogy. Nokia dominated hardware shipments; Apple had just disrupted the paradigm with the iPhone; Google countered with Android as an open ecosystem. Google never became the largest handset manufacturer — yet Android ultimately captured over 72% of global smartphone shipments, and Google controlled the entire mobile internet gateway through its OS.

Google’s current Physical AI positioning bears a striking structural resemblance to the Android era: the Gemini Robotics API (the Android OS), the Intrinsic SDK (the AOSP open-source framework), Google Cloud (GMS), and hardware OEM partnerships. The one critical difference is that Physical AI application scenarios are far more fragmented and customized (industrial, medical, warehousing, and residential each have radically different requirements), so the rollout will be far slower and less uniform.

The Agentic AI Foundation (AAIF), established under the Linux Foundation in December 2025 and anchored by Anthropic’s Model Context Protocol (MCP), OpenAI’s AGENTS.md, and Block’s Goose framework, offers an embryonic interoperability standard that Physical AI could build on. If the Gemini Robotics API becomes the default reasoning backend for that standard, Google’s leadership position will rest on ecosystem-level support rather than single-company technical superiority alone.

VIII. Investment Implications and Forward-Looking Observations

Near-Term (0–6 months): Google Cloud Next (April 22–24) will disclose ER 1.6 Vertex AI enterprise pricing and SLA terms — a key data point for assessing whether Google can convert model capability into cloud revenue. Boston Dynamics’ renewal rates and new-scenario expansion among existing customers will provide early commercial validation.

Medium-Term (6–18 months): Enterprise contracts and annual contract value (ACV) in industrial inspection and warehouse automation; third-party hardware integrations on the Intrinsic platform; Google’s head-to-head win rate against NVIDIA’s Isaac ecosystem in overlapping customer accounts.

Long-Term Structural Question: Will Physical AI value accrue primarily to the software layer (Google, NVIDIA) or the hardware layer (Boston Dynamics, Tesla Optimus)? History suggests hardware margins compress before software platform margins do in every major platform transition. If that dynamic recurs here, Google’s API platform path should command structural pricing power on a five-to-ten-year horizon. If highly customized industrial scenarios require tightly integrated hardware-software stacks to meet reliability thresholds, Tesla’s vertical model will prove more resilient.

Whatever the outcome, Gemini Robotics-ER 1.6 marks a genuine inflection point. Google sold Boston Dynamics in 2017 for an undisclosed sum, effectively conceding it could not yet fuse AI with robot hardware. Nine years later, with LLM reasoning having evolved from language tasks to reading industrial pressure gauges in real factories, Google is reasserting its claim to sovereignty in the robotics era, this time through software rather than hardware. Its weapon is not capital equipment but one of the world’s most capable AI reasoning engines and the ecosystem built around it. History never repeats exactly, but it often rhymes: on this still-unsettled frontier of Physical AI, Google’s bid for dominance may only be getting started.