HBM Supercycle: Control the Memory, Control the Future of AI

The Financial Transformation of SK Hynix, Samsung, and Micron — and the Reshaping of Capital Markets

April 27, 2026

1. Market Cap Transformation: The AI Bull Market’s Biggest Winners

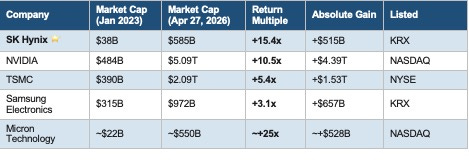

The launch of ChatGPT in late 2022 triggered an arms race in AI compute infrastructure. Between January 2023 and April 2026, the semiconductor supply chain underwent an epic revaluation. NVIDIA rose more than 10x; TSMC more than 5x. Yet the most striking re-rating among major semiconductor names came from neither the GPU giant nor the foundry king. It came from a Korean memory company most investors had never heard of: SK Hynix.

From a $38B market cap in January 2023 to approximately $585B on April 27, 2026, SK Hynix delivered a +15.4x return — surpassing NVIDIA (+10.5x), TSMC (+5.4x), and Samsung (+3.1x). On raw multiples, Micron’s ~+25x leads the group, but that figure is heavily distorted by a historically depressed base: the company was deep in the red in January 2023, with a market cap of only ~$22B. Stripping out that base effect, SK Hynix’s +15.4x from a normal starting point represents the most structurally meaningful re-rating of the AI supercycle.
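The multiples quoted above are simple ratios of ending to starting market cap. The short sketch below reproduces the SK Hynix figure and shows the ending market cap that Micron's approximate 25x would imply. The function names are illustrative, and the Micron result is only as good as the estimated $22B starting point.

```python
def return_multiple(start_cap_b: float, end_cap_b: float) -> float:
    """Ending market cap divided by starting market cap (both in $B)."""
    if start_cap_b <= 0:
        raise ValueError("starting market cap must be positive")
    return end_cap_b / start_cap_b


def implied_end_cap(start_cap_b: float, multiple: float) -> float:
    """Ending market cap ($B) implied by a starting cap and a multiple."""
    return start_cap_b * multiple


# Figures taken from the text: $38B -> ~$585B for SK Hynix.
sk_hynix_multiple = return_multiple(38.0, 585.0)

# Micron: ~$22B estimated base and ~25x, both approximate in the text.
micron_implied_cap = implied_end_cap(22.0, 25.0)

print(f"SK Hynix multiple: {sk_hynix_multiple:.1f}x")
print(f"Micron implied market cap: ~${micron_implied_cap:.0f}B")
```

Because the multiple is so sensitive to the denominator, a small error in Micron's trough estimate moves its headline figure far more than it would for a company starting from a larger base.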

Table 1 — Semiconductor Market Cap Comparison: Jan 2023 → Apr 27, 2026

Note: Micron’s Jan 2023 market cap is an estimate; the company was at a cyclical trough. Measurement period: January 2023 → April 27, 2026.

1.1 Why SK Hynix Outperformed

Three factors combined perfectly. First, pure-play exposure: SK Hynix is essentially a pure DRAM/NAND company, meaning every dollar of AI premium flows directly into its equity value with no dilution from smartphones, appliances, or foundry services. Second, near-monopoly pricing power: it served as NVIDIA’s preferred HBM supplier across the H100, H200, and Blackwell platforms, with HBM commanding 5–8x the price per bit of standard DRAM. Third, sold-out capacity visibility: in a market that prizes certainty above all else, the ability to say “our entire 2026 HBM capacity is 100% committed” justified a sustained premium multiple.

Samsung’s +3.1x — roughly one-fifth of SK Hynix’s gain — reflects two structural drags: extreme business diversification (semiconductors, smartphones, appliances, displays, and foundry together mean AI chip exposure accounts for only ~40% of enterprise value) and a high-profile stumble in HBM (Samsung’s HBM3E failed NVIDIA’s qualification testing in 2024, sending the stock down more than 30% from its peak while SK Hynix surged).

Micron’s eye-catching absolute multiple is almost entirely a recovery story from an unusually distressed base, amplified by the fact that AI demand had not yet been priced into U.S. memory stocks as of early 2023.

2. HBM: The Scarcest Strategic Resource of the AI Era

2.1 Why Memory Became AI’s Binding Constraint

GPU compute has grown exponentially over the past decade; conventional DRAM bandwidth has not kept pace. Training and inference on large language models are, at their core, dense cycles of matrix multiplication — and compute units sit idle waiting for data to arrive. This is the “memory wall”: bandwidth, not compute, is the true ceiling on AI performance.

High Bandwidth Memory (HBM) solves this by stacking multiple DRAM dies vertically and co-packaging them on the same substrate as the GPU. Bandwidth density improves by more than 10x versus conventional DRAM, while energy per bit drops sharply. The peak throughput of a Vera Rubin GPU card is determined in large part by the quality of the sixteen HBM4 stacks it carries.

The 2026 Stanford AI Index reports that frontier models have crossed 50% on the hardest human benchmarks, up from 8.8% just a few years ago. That capability leap maps directly, on the hardware side, to the generational bandwidth step from HBM3E to HBM4. When people debate the ceiling of AI capability, one of the binding constraints is being manufactured right now in cleanrooms in Icheon and Cheongju, South Korea.

2.2 HBM4: An Architectural Paradigm Shift

The sixth-generation HBM4 represents the most aggressive architectural leap to date. The data interface widens from 1,024 lines (HBM3E) to 2,048 — think of doubling the number of highway lanes. Per-stack bandwidth reaches 2.0–3.3 TB/s, and a full Vera Rubin GPU card with sixteen HBM4 stacks exceeds 24 TB/s of total memory bandwidth.
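The per-stack figures follow from a standard relationship: peak bandwidth equals interface width times per-pin data rate, divided by eight to convert bits to bytes. The sketch below applies it to the 2,048-line HBM4 interface. The 8 and 13 Gb/s pin speeds are assumptions chosen to bracket the quoted 2.0–3.3 TB/s range, 11 Gb/s matches the pin speed cited for Micron's HBM4 later in this report, and the 9.6 Gb/s HBM3E rate is likewise an assumption.

```python
def stack_bandwidth_tbps(interface_bits: int, pin_speed_gbps: float) -> float:
    """Peak bandwidth of one HBM stack in TB/s (decimal units).

    interface_bits: number of data lines (1,024 for HBM3E, 2,048 for HBM4).
    pin_speed_gbps: per-pin data rate in Gb/s.
    """
    gigabytes_per_second = interface_bits * pin_speed_gbps / 8  # bits -> bytes
    return gigabytes_per_second / 1000  # GB/s -> TB/s


HBM4_LINES = 2048
HBM3E_LINES = 1024

# Pin speeds are illustrative assumptions, not vendor specifications.
for pin_speed in (8.0, 11.0, 13.0):
    bw = stack_bandwidth_tbps(HBM4_LINES, pin_speed)
    print(f"HBM4 at {pin_speed:.0f} Gb/s per pin: {bw:.2f} TB/s per stack")

hbm3e = stack_bandwidth_tbps(HBM3E_LINES, 9.6)  # assumed HBM3E pin speed
hbm4 = stack_bandwidth_tbps(HBM4_LINES, 11.0)
print(f"HBM4 / HBM3E ratio: {hbm4 / hbm3e:.1f}x")
```

Doubling the interface width doubles bandwidth at a fixed pin speed, which is why the wider bus matters as much as the faster signaling.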

More importantly, HBM4 introduces a dedicated Logic Base Die: an intelligent control chip embedded at the bottom of the memory stack that routes and pre-sorts data before it reaches the GPU, effectively upgrading HBM from a passive storage medium to an active co-processor. Because this base die is built on a foundry logic process (from TSMC’s 12nm class down to 5nm or even 3nm, far more complex than the DRAM dies above it), the three HBM suppliers have taken meaningfully different paths.

All three — SK Hynix, Samsung, and Micron — are integrated device manufacturers (IDMs) with their own fabs, unlike fabless designers such as NVIDIA, Broadcom, or Qualcomm. But their base die strategies diverge sharply. SK Hynix has entered a deep “One-Team” partnership with TSMC, outsourcing the logic base die to TSMC’s 12nm process (with further node advancement planned). Samsung is pursuing full vertical integration — DRAM, logic base die, and advanced packaging all in-house — betting that Samsung Foundry can close the yield gap that cost it NVIDIA share in the HBM3E cycle. Micron designed its own internal base die to control costs and supply chain, but its advanced logic capabilities have historically lagged, which is one reason it missed qualification for NVIDIA’s Vera Rubin flagship platform in this generation.

The structural supply constraint underpinning this supercycle is fundamental: HBM manufacturing is extraordinarily complex (multi-layer 3D stacking with through-silicon vias), capacity expansion takes two to three years, and building a new cleanroom takes three to five years. Every unit of capacity shifted to HBM removes approximately three units of supply from the consumer memory market. That supply inelasticity is why this upcycle has proven far more durable than the 2018–2022 commodity memory cycles.
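The crowd-out effect can be illustrated with a toy model. This is a minimal sketch that takes the roughly three-to-one ratio from the paragraph above at face value. The baseline of 100 units and the shift of 10 units are hypothetical numbers chosen only to show the arithmetic.

```python
HBM_CROWD_OUT_RATIO = 3.0  # approximate ratio quoted in the text


def conventional_supply_after_shift(
    baseline_units: float,
    hbm_units_added: float,
    ratio: float = HBM_CROWD_OUT_RATIO,
) -> float:
    """Conventional DRAM supply left after capacity is shifted to HBM.

    Each unit of HBM capacity is assumed to remove `ratio` units of
    conventional supply, as a simple linear approximation.
    """
    removed = hbm_units_added * ratio
    if removed > baseline_units:
        raise ValueError("shift exceeds available conventional capacity")
    return baseline_units - removed


# Hypothetical example: 100 units of conventional supply, 10 units moved to HBM.
remaining = conventional_supply_after_shift(100.0, 10.0)
print(f"Conventional supply remaining: {remaining:.0f} units")
```

Under these assumptions a 10-unit shift toward HBM cuts conventional supply by 30 units, which is the mechanism behind tighter consumer memory pricing.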

3. SK Hynix: From Picks-and-Shovels to the Dominant Force of the AI Era

If NVIDIA is selling the weapons in this AI arms race, SK Hynix is selling the shovels — and it is the only shovel almost everyone has to use.

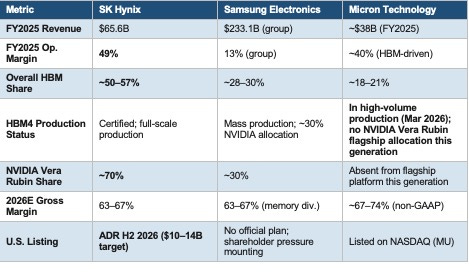

3.1 Financial Breakout: Margins That Beat TSMC

In 2023, SK Hynix posted an operating loss of $5.2B and a net loss of $6.1B — the deepest trough in the memory cycle. Just two years later, 2025 operating profit reached $31.9B, with an operating margin of 49% and a net margin of 44%. For the first time in history, SK Hynix surpassed Samsung Electronics to set an all-time profitability record among Korean-listed companies.

The quarterly acceleration is even more striking: Q4 2025 revenue of $23.6B and operating profit of $13.0B, against a reported operating margin of 58% (the rounded dollar figures here imply closer to 55%). For 2026, the entire year’s HBM supply is already sold out; HBM3E contract prices have risen approximately 20%; and HBM’s share of DRAM revenue has climbed from roughly 5% in 2022 to over 50% in 2025. Blended gross margins for the three memory suppliers are expected to reach 63–67% in 2026, which would put memory margins above TSMC’s (~60%) for the first time. Advanced server memory modules are already generating gross margins approaching 75%, outpacing many AI accelerator products.

NVIDIA has become SK Hynix’s single most important customer, contributing roughly 16% of total revenue in 2024 and approximately 27% in 2025. For the Vera Rubin platform in 2027, SK Hynix is reported to have locked in more than two-thirds of total HBM4 supply allocation.

3.2 Capacity Strategy: Cheongju to Indiana

SK Hynix’s core HBM production is concentrated at the M16 fab in Icheon and M15X in Cheongju, South Korea. The Cheongju M15X front-end facility (approximately $14B investment) came online ahead of schedule. The long-term Yongin semiconductor cluster (~$15B) begins phased production from 2027. In the United States, the Indiana advanced packaging facility (first-phase investment ~$3.8B) is specifically designed to serve NVIDIA’s domestic supply requirements and is timed to the Vera Rubin ramp. A ~$7.9B ASML EUV tool order is slated for delivery before 2027, targeting HBM4 high-volume manufacturing.

One additional catalyst for 2026 deserves attention: the OpenAI Stargate supercomputing project. SK Hynix has signed an HBM supply agreement for Stargate, with industry estimates suggesting the project could effectively double total industry HBM demand.

The TSMC alliance received a visible public endorsement on April 22, 2026, at TSMC’s North America Technology Symposium in Santa Clara. SK Hynix Chief Development Officer Ahn Hyun delivered a keynote address articulating a vision of “the integration of memory and logic,” and showcased the 16-layer, 48GB HBM4 product, with descriptions at the booth explicitly referencing TSMC advanced logic in the base die. This was the first time SK Hynix took the stage at TSMC’s own annual technology event to publicly anchor the alliance, marking the moment the One-Team relationship moved from a supply chain arrangement to a shared technology narrative. Also on display were the industry’s highest-capacity 256GB 3DS RDIMM and the world’s first 64GB RDIMM built on the 1c-nm process node. Notably, the 16-layer HBM4 showcase signals that SK Hynix is not waiting for Samsung’s hybrid bonding challenge: it is already moving to compete on that exact battleground.

3.3 U.S. ADR Listing: The Biggest Semiconductor Capital Markets Event of 2026

On March 24, 2026, SK Hynix announced that it had confidentially submitted a Form F-1 registration statement to the U.S. Securities and Exchange Commission (confirmed by Reuters, CNBC, and the company’s own regulatory filings). The company is targeting an ADR listing on a U.S. exchange by the second half of 2026, with Goldman Sachs, Citi, and JPMorgan as joint bookrunners. Based on the plan to list 2–3% of total shares, a source cited by Reuters indicated the offering could raise up to $14B, which would make it the largest U.S. listing by a Korean company in decades, eclipsing Coupang’s $4.6B IPO in 2021.
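As a rough sanity check on the offering size, the sketch below applies the 2–3% share range to the approximately $585B market cap cited earlier in this report. It assumes, purely for illustration, that the ADRs price at the prevailing market value with no discount. Actual proceeds would depend on pricing, structure, and timing.

```python
MARKET_CAP_B = 585.0  # approximate market cap cited earlier in this report, $B


def offering_size_b(market_cap_b: float, share_fraction: float) -> float:
    """Gross proceeds ($B) if `share_fraction` of equity is sold at market value."""
    return market_cap_b * share_fraction


def implied_fraction(market_cap_b: float, proceeds_b: float) -> float:
    """Fraction of equity that a given raise would represent at market value."""
    return proceeds_b / market_cap_b


low = offering_size_b(MARKET_CAP_B, 0.02)
high = offering_size_b(MARKET_CAP_B, 0.03)
fraction_for_14b = implied_fraction(MARKET_CAP_B, 14.0)

print(f"2% of shares: ${low:.1f}B")
print(f"3% of shares: ${high:.1f}B")
print(f"$14B raise: {fraction_for_14b:.1%} of market cap")
```

On these assumptions the reported figure of up to $14B sits inside the range implied by listing 2–3% of shares.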

The strategic rationale is “Korea Discount” arbitrage. The same HBM pricing power and the same NVIDIA supplier position are valued materially lower on the Korea Exchange than they would be on NASDAQ, and the gap stems from differences in investor base, disclosure standards, and market accessibility rather than from fundamentals. A U.S. listing is an attempt to get the world’s deepest capital market to price that competitive position fairly, while simultaneously opening a financing channel for more than $30B of capex already under construction or in planning.

The announcement had an immediate spillover effect: three days after SK Hynix disclosed the F-1 filing, Artisan Partners (holding ~0.7% of Samsung) sent an open letter to Samsung’s board calling on management to follow suit, arguing a U.S. listing would give Samsung access to American retail investors and meaningfully close its own valuation discount.

4. Samsung: The Price of Diversification — and a Strategic Comeback in HBM4

4.1 The Conglomerate Discount

Samsung Electronics reported FY2025 group revenue of approximately $233.1B — 3.6x SK Hynix — but achieved an operating margin of only 13%, versus SK Hynix’s 49%. In 2025, Samsung’s total operating profit ($29.4B) was surpassed by SK Hynix ($31.9B) for the first time in history. Looking at the DS (Device Solutions / semiconductor) division alone, operating profit was $16.7B — barely half of SK Hynix’s $31.9B.
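The comparisons in this paragraph can be cross-checked against one another. The sketch below derives Samsung's operating margin from the stated revenue and profit, backs out SK Hynix's implied revenue from the 3.6x ratio, and confirms that the result is consistent with the 49% margin quoted above. The implied revenue is a derived estimate, not a reported figure.

```python
def operating_margin(operating_profit_b: float, revenue_b: float) -> float:
    """Operating profit as a fraction of revenue."""
    return operating_profit_b / revenue_b


# Figures from the text, in $B.
samsung_revenue = 233.1
samsung_op_profit = 29.4
samsung_ds_op_profit = 16.7
sk_hynix_op_profit = 31.9
revenue_ratio = 3.6  # Samsung revenue as a multiple of SK Hynix revenue

samsung_margin = operating_margin(samsung_op_profit, samsung_revenue)

# Derived estimate: SK Hynix revenue implied by the 3.6x ratio.
sk_hynix_revenue_implied = samsung_revenue / revenue_ratio
sk_hynix_margin_implied = operating_margin(sk_hynix_op_profit, sk_hynix_revenue_implied)

ds_vs_sk_hynix = samsung_ds_op_profit / sk_hynix_op_profit

print(f"Samsung operating margin: {samsung_margin:.1%}")
print(f"SK Hynix implied revenue: ${sk_hynix_revenue_implied:.2f}B")
print(f"SK Hynix implied operating margin: {sk_hynix_margin_implied:.1%}")
print(f"Samsung DS profit as a share of SK Hynix profit: {ds_vs_sk_hynix:.1%}")
```

The derived margins land within rounding distance of the 13% and 49% stated in the text, and the DS division's profit comes out at just over half of SK Hynix's.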

Samsung’s business spans DRAM/NAND/HBM (~$97.9B revenue), Galaxy smartphones (~$76.9B), consumer electronics and displays (~$38.5B), OLED panels (~$24.5B, the largest supplier to Apple iPhone), and Harman audio (~$10.5B). In an AI-driven market, this conglomerate structure means Samsung cannot capture anything like the AI-pure-play premium that SK Hynix commands — the valuation discount is structural, not cyclical.

4.2 HBM: The 2024 Stumble and the 2026 Recovery

In 2024, Samsung’s HBM3E failed NVIDIA’s qualification process, effectively locking it out of the most critical segment of the AI memory market for the better part of a year. The stock fell more than 30% from its all-time high to a 52-week low of approximately ₩53,700 (~$36 at ₩1,480/USD) while SK Hynix continued to surge. That qualification failure is the single event most directly responsible for the divergence in the two companies’ share prices over the past two years.

The picture in 2026 looks substantially different. After NVIDIA raised its Vera Rubin pin-speed requirement to 10–11 Gb/s or higher, Samsung was actually the first supplier to achieve HBM4 qualification (beginning limited shipments on February 12, 2026, per Samsung and industry reports) and secured approximately 30% of NVIDIA’s HBM4 allocation for the Vera Rubin platform. For Samsung, this constitutes a meaningful strategic recovery in the highest-value tier of AI memory.

Samsung’s real strategic bet lies beyond today’s 12-layer HBM4, in 16-layer stacking (16-Hi). Its core differentiating technology is copper-to-copper direct bonding (hybrid bonding), which eliminates the solder bump structure entirely, enabling a thinner, lower-thermal-resistance manufacturing path for stacks beyond 12 layers. If Samsung clears NVIDIA’s 16-Hi HBM4 qualification in Q4 2026, today’s 70/30 split could face significant reversal on the Feynman platform in 2027–2028. Samsung remains the only one of the three that is fully vertically integrated (logic base die, DRAM, and packaging all in-house), a full-stack IDM moat that is durable as long as internal process yields remain competitive.

Samsung also holds another card: approximately 60% of HBM3E supply for Google’s Ironwood TPU platform. Hyperscalers — Google, Amazon, Microsoft — are reluctant to concentrate HBM sourcing entirely in SK Hynix, giving Samsung a persistent strategic presence in the non-NVIDIA AI accelerator ecosystem.

4.3 U.S. Listing: Growing Pressure, Structural Complexity

Samsung has no formal NYSE or NASDAQ listing; U.S. investors can only access the stock through the OTC pink sheets (ticker SSNLF, with extremely limited liquidity) or via ETFs. The deep structural barriers to a U.S. listing include: the disclosure burden of approximately 1,600 subsidiaries; the chaebol governance structure (the Lee family exercises effective control through a complex web of cross-shareholdings) which creates inherent tension with institutional investor demands for reform; and exposure to shareholder class-action litigation and IP disputes under U.S. jurisdiction.

External pressure is nonetheless mounting. If SK Hynix’s ADR successfully closes in H2 2026 and achieves a meaningful re-rating, the probability of Samsung initiating its own ADR process in 2027–2028 rises materially.

5. Micron: The American Passport Is the Deepest Moat

5.1 From Commodity Hell to AI Profit Machine

No major semiconductor company has experienced a more dramatic reversal of fortune than Micron. In 2023, the company was hit with a Chinese government security review and a de facto sales ban in China, posting a net loss of more than $5.9B. By fiscal Q2 2026 (quarter ending February 2026), revenue was $23.86B — up 196% year-over-year — with GAAP net income of $13.79B, EPS of $12.07, and a beat of 38.79% versus consensus estimates. Management guided fiscal Q3 2026 revenue to $33.5B, a single quarter that would exceed the company’s total revenue from three fiscal years prior. Non-GAAP gross margins surged from roughly 22% in early 2025 to over 74% in Q2 2026 — a company record.
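A few derived figures help put the quarter in context. The sketch below backs out the prior-year revenue implied by 196% growth, the GAAP net margin, and the sequential growth implied by the fiscal Q3 guidance. All inputs come from the paragraph above. The outputs are derived estimates rather than reported numbers.

```python
def prior_period_value(current: float, growth_rate: float) -> float:
    """Value in the comparison period given current value and growth rate."""
    return current / (1.0 + growth_rate)


def growth_rate(previous: float, current: float) -> float:
    """Fractional growth from previous to current."""
    return current / previous - 1.0


# Figures from the text, in $B.
q2_revenue = 23.86
q2_net_income = 13.79
q3_revenue_guidance = 33.5
yoy_growth = 1.96  # 196% year-over-year

prior_year_q2_revenue = prior_period_value(q2_revenue, yoy_growth)
net_margin = q2_net_income / q2_revenue
sequential_growth = growth_rate(q2_revenue, q3_revenue_guidance)

print(f"Implied prior-year quarter revenue: ${prior_year_q2_revenue:.2f}B")
print(f"GAAP net margin: {net_margin:.1%}")
print(f"Guided sequential revenue growth: {sequential_growth:.1%}")
```

A 196% increase means revenue nearly tripled, not nearly doubled, which is why the implied year-ago quarter is only about a third of the current one.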

On March 16, 2026, at NVIDIA GTC 2026, Micron formally announced that its HBM4 36GB 12H had entered high-volume shipment, with packaging explicitly labeled “designed for NVIDIA Vera Rubin.” Technical specifications: pin speeds above 11 Gb/s, total bandwidth exceeding 2.8 TB/s (a 2.3x improvement over HBM3E), and greater than 20% better power efficiency. However, supply chain data from SemiAnalysis (February 2026, widely cited by Investing.com, Korea Economic Daily, and others) shows that NVIDIA’s flagship Vera Rubin platform (VR200 NVL72) sources HBM4 exclusively from SK Hynix (~70%) and Samsung (~30%), with zero allocation for Micron — the primary cause being that Micron’s internally designed base die failed to meet NVIDIA’s pin speed requirements. Samsung had already begun limited shipments to NVIDIA on February 12, weeks before Micron’s GTC announcement.

Micron’s HBM4 production is currently directed toward non-NVIDIA customers such as AMD’s MI400 series, and the company is expected to gain share in lower-tier Rubin variants going forward. Despite the flagship absence, Micron’s management confirmed in late March 2026 that its full-year HBM4 capacity is 100% committed under binding contracts. Its “American passport” strategic value — defense procurement eligibility, CHIPS Act subsidies — remains fully intact. The transition from “commodity cycle supplier” to “AI infrastructure strategic partner” is underway; it simply unfolds outside NVIDIA’s flagship ecosystem for this generation.

5.2 A $200 Billion National-Scale Bet

What has genuinely shaken the market is Micron’s commitment to invest approximately $200B in U.S. manufacturing and R&D over the coming years. But a critical point of context: today, more than 60% of Micron’s DRAM capacity sits in Taiwan, which remains its largest production hub. Its most advanced process nodes (1α, 1β, 1γ) are all manufactured in Taiwan, as is the bulk of its HBM production. In other words, Micron today is functionally a company “listed in America, manufactured in Taiwan”: it owns its fabs, unlike fabless designers, but its manufacturing center of gravity remains offshore. The $200B U.S. buildout is a long-term blueprint: Idaho ($50B, first fab targeting 2027 production), New York ($100B, up to four fabs, first by 2028+), Virginia ($5B, existing fab expansion), and domestic HBM advanced packaging capacity (~$45B, phased). Samsung and SK Hynix are also building in the U.S. (Samsung in Taylor, Texas; SK Hynix in Indiana), a sign that the entire memory supply chain is aligning with the strategic logic of the CHIPS and Science Act.
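The four components of the buildout can be tallied to confirm that they account for the full headline commitment. This is a minimal sketch using the approximate dollar figures from the paragraph above. The dictionary keys are illustrative labels rather than official project names.

```python
# Approximate commitments from the text, in $B.
us_buildout_b = {
    "Idaho": 50.0,
    "New York": 100.0,
    "Virginia": 5.0,
    "HBM advanced packaging": 45.0,
}

total_b = sum(us_buildout_b.values())
shares = {site: amount / total_b for site, amount in us_buildout_b.items()}

print(f"Total: ${total_b:.0f}B")
for site, share in shares.items():
    print(f"{site}: {share:.1%} of total")
```

New York alone represents half of the plan, and its first fab is the latest to arrive, so most of the spending falls toward the back of the construction window.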

Government support is substantial: $6.4B in direct CHIPS Act grants, a 35% Advanced Manufacturing Investment Credit on all qualifying spend, and an additional $5.5B committed by New York State over 20 years. The underlying strategy has three pillars: (1) a target of 40% of total DRAM output on U.S. soil would fundamentally reshape the geopolitical risk profile of the supply chain; (2) U.S.-manufactured HBM is easier to qualify for Department of Defense and federal government procurement, a niche that SK Hynix and Samsung structurally cannot access; (3) the 2023 China ban demonstrated that Micron is a hostage in a U.S.–China tech decoupling scenario, making the domestic pivot strategically necessary regardless of cycle timing.

Perhaps the most counterintuitive insight here is that Micron’s deepest competitive moat may not be its HBM technology but its American passport. In a technology landscape increasingly weaponized by geopolitics, that is a credential that neither SK Hynix nor Samsung can replicate.

The bet carries real risk: the factory construction window (2026–2029) could overlap with the next memory down-cycle, forcing new capacity to find buyers in a softer demand environment. Micron’s stock has already pulled back sharply from its late-2025 highs, and the muted market reaction to guidance of $33.5B in a single quarter suggests investors are beginning to price a plausible narrative: the cycle peak may be approaching.

6. Competitive Landscape and the Supercycle Mid-Game

Table 2 — Three-Way Competitive Scorecard

6.1 Durability of the HBM Supercycle

The key structural difference between this cycle and prior ones: AI infrastructure capex is multi-year and non-discretionary. The four largest hyperscalers are collectively spending more than $200B on AI infrastructure in 2026 alone, with budgets growing each year. The global HBM market has expanded from $3.5B in 2023 to $34.5B in 2025; BofA projects $54.6B in 2026, and Goldman Sachs forecasts a market of $85–90B or more by 2027. The three suppliers’ combined 2026 DRAM capex exceeds $61.3B, but building cleanrooms takes three to five years, leaving supply elasticity structurally far below historical norms.
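The market-size figures imply very different growth rates across the period, which a compound annual growth rate (CAGR) calculation makes explicit. The sketch below uses only the dollar figures quoted above. The 2027 value takes the low end of the Goldman Sachs range as an assumption.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate between two values over `years` years."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


# HBM market size in $B, from the text.
hbm_market_b = {2023: 3.5, 2025: 34.5, 2026: 54.6, 2027: 85.0}  # 2027: low end

growth_2023_2025 = cagr(hbm_market_b[2023], hbm_market_b[2025], 2)
growth_2025_2026 = cagr(hbm_market_b[2025], hbm_market_b[2026], 1)
growth_2026_2027 = cagr(hbm_market_b[2026], hbm_market_b[2027], 1)

print(f"2023-2025 CAGR: {growth_2023_2025:.0%}")
print(f"2025-2026 growth: {growth_2025_2026:.1%}")
print(f"2026-2027 growth (low end): {growth_2026_2027:.1%}")
```

Growth decelerates sharply from the tripling-per-year pace of 2023–2025, yet the projections still imply the market expanding by more than half in each of the next two years.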

Risks are real: a slowdown in AI demand as frontier model training requirements plateau; pricing pressure from Chinese DRAM suppliers in the commodity segment (see Section 6.2); geopolitical concentration risk given the extreme dependence on Korea and Taiwan; and a wave of new capacity arriving in 2027–2028 across all three suppliers. Samsung’s full HBM4 comeback and Micron’s improving position will also erode SK Hynix’s monopoly premium over time.

6.2 China’s Catch-Up: Faster Than Expected, But the HBM Ceiling Holds

Beyond the three dominant players, one variable is taking shape at a pace most observers did not anticipate: China’s domestic HBM supply chain. The threat will not directly disrupt the HBM4 competitive landscape in the near term, but the speed of China’s catch-up has become an unmistakable medium-term supply-side pressure.

China’s two core HBM players occupy very different positions in the ecosystem. ChangXin Memory Technologies (CXMT, Hefei) is China’s largest DRAM manufacturer. According to reports from TechInsights and Tom’s Hardware in early 2026, CXMT is on track to begin HBM3 (8-layer stack) mass production by late 2026, implying a three-to-four-year technology gap versus SK Hynix’s current HBM4 node. That gap sounds large, but it is far narrower than the “10-year” lag that most analysts cited just two years ago. CXMT is expanding its Hefei capacity significantly and building a new HBM back-end packaging fab in Shanghai (Reuters, February 2026: targeting ~30,000 wafer starts/month initial capacity). The company is simultaneously pursuing an A-share IPO on the Shanghai Stock Exchange, targeting RMB 29.5B (~$4.1B) in proceeds to fund capacity expansion.

Yangtze Memory Technologies (YMTC, Wuhan) — China’s NAND flash leader — is taking a different route through its subsidiary Wuhan Xinxin Semiconductor (XMC), which is developing HBM packaging technology (through-silicon via process) with an initial capacity of approximately 3,000 wafers/month, primarily positioned as a packaging services provider in a vertical division of labor with CXMT.

China’s HBM ambitions face two fundamental binding constraints. First, the U.S. December 2024 export control package explicitly added HBM to the restricted list and simultaneously restricted equipment for TSV and related processes; embedded foreign service engineers have been instructed to exit. Second, the absence of EUV lithography creates a structural bottleneck below the 15nm node, and HBM4 and later generations require 1b/1c-nm class DRAM process nodes. In aggregate, CXMT’s HBM3 production, two full generations behind Samsung’s current HBM4, is primarily aimed at serving domestic Chinese AI chip customers (Huawei Ascend, Cambricon, etc.) and poses no near-term direct threat to the three incumbents in the global HBM market. However, by 2028–2030, if China achieves meaningful domestic equipment substitution, scaled Chinese DRAM and low-end HBM supply could commoditize the market and push global memory pricing down significantly. This is the longest-dated but most structurally important tail risk of the current supercycle.

Tight HBM supply will continue to sustain historically high profitability for all three incumbents through 2026–2028. The next structural inflection point is whether Samsung’s hybrid bonding technology delivers on its promise in 2027; if it does, today’s competitive landscape faces deep reshaping. The longer-term structural variable is whether China can achieve low-end HBM volume production at scale by 2028–2030.

Epilogue: After the Age of GPU Supremacy

While the three players compete for HBM4 share, someone is already watching the next war.

Professor Kim Jung-ho of KAIST (Korea Advanced Institute of Science and Technology) — widely known as the “father of HBM” — has put forward a prediction that sounds almost heretical when NVIDIA’s market cap sits near $5 trillion: the ultimate winner of the AI era is not the GPU. It is memory. And the architecture he envisions is not a minor adjustment — it is a fundamental inversion of the current power structure.

Today, GPUs integrate HBM: the memory is soldered into the GPU package, subordinate to the compute die. Kim’s thesis is that the polarity will reverse. Tomorrow’s architecture will see HBM and HBF integrate the GPU: memory becomes the primary chip, and GPU and CPU logic are reduced to co-processing elements embedded within the memory stack. The center of gravity in compute shifts from “the chip that calculates” to “the substrate that stores and moves.” In this Memory-Centric computing architecture, the storage medium (HBM for working memory, and next-generation High Bandwidth Flash, or HBF, a NAND-based high-speed storage tier, for long-term context) becomes the system’s core component, and the logic it absorbs becomes a commodity module.

The underlying logic is not esoteric. As AI evolves from the “generative” phase toward “agentic AI,” models must process entire documents, video archives, and massive knowledge bases as unified context windows. The bandwidth and capacity requirements could grow by three orders of magnitude from today’s levels. Kim directly attributes AI’s persistent hallucination problem to insufficient memory capacity: because the context window cannot hold enough information, the model is forced to answer from an incomplete picture. Building a genuinely hallucination-free AI agent requires something close to “perfect recall” — and HBM alone, at its current trajectory, cannot deliver that.

Kim’s proposed solution is HBF (High Bandwidth Flash): replace the DRAM dies in the stack with NAND flash — the same technology used in smartphones and SSDs, which offers orders-of-magnitude more capacity but lower speed than DRAM — to achieve a quantum leap in storage density. HBM and HBF then form a two-tier memory architecture: HBM as the high-speed working cache for active computation; HBF as the vast reference knowledge base. SK Hynix moved first: in February 2026, it co-founded the HBF standardization consortium with SanDisk (U.S.), establishing the standard-setting agenda for the next generation. Samsung is following. Kim draws an explicit parallel to the early 2010s, when SK Hynix bet aggressively on HBM while Samsung hesitated — the former now sits at the top of the industry, while the latter spent years recovering lost ground. His timeline: HBF engineering samples by around 2027; first large-scale commercial adoption by Google, NVIDIA, or AMD as early as 2028.

This forecast deserves to be taken seriously, because Kim said the same thing about HBM a decade ago, and he was right. Looking back to 2013, few believed memory would become the value core of an AI accelerator. Today, SK Hynix’s market cap has multiplied more than fifteenfold since early 2023, outpacing both NVIDIA and TSMC. If Kim is right again, then the HBM supercycle is merely the prologue to a larger paradigm shift. The inversion from “GPU integrates memory” to “memory integrates GPU,” a swap of just two words, implies a wholesale reordering of the semiconductor value chain. When that day comes, the company that controls the storage will be the company that controls the future of AI.

All KRW figures converted at the prevailing rate of ₩1,480 per USD as of the date of writing. For research purposes only.