Inside Amazon’s $33 Billion Anthropic Bet: Capital, Compute, and the Real Strategic Logic

Amazon has committed up to $33 billion less to buy Anthropic’s equity than to lock in a ten-year cloud contract worth more than $100 billion. The more successful Anthropic becomes, the more it spends on AWS. The investment is the mechanism; capturing cloud revenue is the goal.

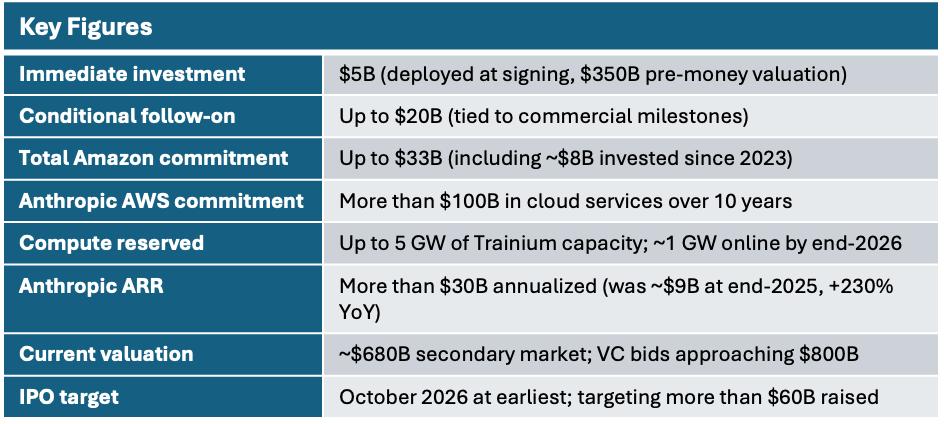

April 20, 2026. Amazon announced an investment of up to $25 billion in Anthropic, accompanied by a ten-year compute agreement under which Anthropic commits to purchasing more than $100 billion in AWS cloud services in exchange for up to 5 GW of guaranteed Trainium capacity. Combined with roughly $8 billion deployed since 2023, Amazon’s total committed capital reaches up to $33 billion — one of the largest single strategic investments in the history of the AI industry.

The Core Thesis

This is not a venture capital bet. The structure is closer to circular financing: Amazon puts money into Anthropic, and Anthropic pledges to spend it, and more, on Amazon’s cloud, so the cash completes a loop. In substance, a supplier is pre-funding a ten-year procurement contract with its largest customer.
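The loop can be made concrete with a rough cash-flow sketch. The headline figures (up to $33 billion of capital, more than $100 billion of AWS spend over ten years) are the ones reported for the deal. The timing is not: the sketch assumes, purely for illustration, that all capital goes out up front and that Anthropic's AWS spend is spread evenly across the contract. Cloud receipts are revenue, not profit, so the result describes cash direction only.

```python
# Illustrative only: headline figures from the article, timing is assumed.
INVESTMENT_BN = 33.0         # total committed capital, "up to" (article figure)
CLOUD_COMMITMENT_BN = 100.0  # minimum AWS spend over the contract (article figure)
YEARS = 10                   # contract length (article figure)


def cumulative_net_outflow(investment_bn, commitment_bn, years):
    """Amazon's cumulative net cash outflow at the end of each contract year.

    Hypothetical simplifications: the full investment is deployed at the
    start, and the cloud commitment is consumed in equal annual amounts.
    """
    annual_spend = commitment_bn / years
    return [investment_bn - annual_spend * year for year in range(1, years + 1)]


path = cumulative_net_outflow(INVESTMENT_BN, CLOUD_COMMITMENT_BN, YEARS)

# First year in which cumulative cloud receipts cover the capital deployed.
breakeven_year = next(year for year, net in enumerate(path, start=1) if net <= 0)

print(f"Net outflow after year 1: ${path[0]:.0f}B")
print(f"Cloud receipts cover the investment in year {breakeven_year}")
print(f"Net position after year {YEARS}: ${path[-1]:.0f}B")
```

Under these assumptions the capital is recycled back to AWS within the first half of the contract. A back-loaded consumption curve or a staged investment would shift the crossover, which is why the conditional tranche matters.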

Four observations define the deal’s significance:

• Circular financing. Net of the cloud spend Anthropic has committed to send back to AWS, Amazon’s effective cash exposure is far below the headline $33 billion. The same structure underpins the Microsoft–OpenAI and Nvidia–OpenAI relationships: cloud and chip vendors use investment to lock in AI companies’ largest cost line.

• AI infrastructure has entered a dual-lock phase. AI labs need compute; cloud providers need differentiated model assets. Within two months, Amazon completed symmetric strategic ties with both OpenAI ($50B investment + $100B AWS commitment) and Anthropic — a “back every top lab” strategy rather than a single-winner bet.

• Trainium gets its most important real-world validation. Anthropic already runs more than one million Trainium 2 chips — the largest deployment of Amazon’s custom silicon to date. A ten-year commitment provides credible long-run demand-side support for the Trainium roadmap, and is Amazon’s most substantive argument yet against Nvidia’s dominance in AI accelerators.

• Anthropic treats the deal as pre-IPO infrastructure insurance. Annualized revenue climbed from $1 billion to more than $30 billion in roughly 15 months; compute has become the binding constraint on growth. The 5 GW reservation amounts to a moat of roughly three years of pre-secured training and inference capacity.

1. Deal Structure

1.1 Capital Tranches and Conditions

The $25 billion in new investment comes in two tranches. The first $5 billion was deployed at signing. The remaining $20 billion is conditional — tied to Anthropic’s commercial milestones and its rate of AWS consumption. If growth stalls, Amazon can hold back; if it accelerates, Amazon retains the right to continue deploying capital.

On valuation: Bloomberg reported that Anthropic confirmed the deal’s pre-money valuation at $350 billion, consistent with the pre-money pricing of the February Series G round that raised $30 billion and brought the post-money valuation to $380 billion. Sources citing “$380 billion” are referencing that post-money figure. Amazon is effectively entering at the same price as investors in the prior round. Secondary-market VC bids are approaching $800 billion — more than double the deal price.
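The pre-money and post-money figures are easy to conflate, so a short worked check may help. The sketch below uses only the numbers quoted above; the function names are illustrative, and the stake calculation is the textbook simplification that ignores option pools, secondary sales, and share classes.

```python
# All figures in billions of USD, as quoted in the article.
PRE_MONEY_BN = 350.0        # Series G pre-money, also Amazon's entry valuation
SERIES_G_RAISED_BN = 30.0   # new money raised in the Series G
SECONDARY_BID_BN = 800.0    # approximate secondary-market bid level


def post_money(pre_money_bn, raised_bn):
    """Post-money valuation is pre-money plus the new capital raised."""
    return pre_money_bn + raised_bn


def new_investor_stake(pre_money_bn, raised_bn):
    """Fraction of the company held by the round's new money (simplified)."""
    return raised_bn / post_money(pre_money_bn, raised_bn)


post = post_money(PRE_MONEY_BN, SERIES_G_RAISED_BN)
stake = new_investor_stake(PRE_MONEY_BN, SERIES_G_RAISED_BN)
bid_multiple = SECONDARY_BID_BN / PRE_MONEY_BN

print(f"Series G post-money: ${post:.0f}B")
print(f"Stake bought by Series G money: {stake:.1%}")
print(f"Secondary bids vs. deal price: {bid_multiple:.2f}x")
```

The $350 billion and $380 billion figures therefore describe the same round, and the secondary bids sit at a little under 2.3 times the price Amazon is paying.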

Two parallel transactions — Amazon→OpenAI and Amazon→Anthropic — share the same economic logic: equity upside plus cloud revenue, with investment serving as the mechanism to secure the latter.

1.2 Compute: The 5 GW Commitment

At the center of the deal is a commitment to provide Anthropic with up to 5 GW of Amazon Trainium capacity delivered in phases. Trainium 2 ramps first, with a combined Trainium 2 and Trainium 3 capacity of approximately 1 GW expected by end-2026, scaling toward 5 GW thereafter. Anthropic will also receive tens of millions of Graviton CPU cores to support everyday inference workloads.

Anthropic in turn commits to running its large language models on AWS Trainium for the next decade, deeply entangling Claude’s training and inference with Amazon’s chip ecosystem. Switching costs are contractually embedded — competitors cannot simply undercut on price to win this workload back.

Last week, OpenAI publicly suggested that Anthropic was “operating on a meaningfully smaller compute curve.” The 5 GW lock-in is the most direct rebuttal Anthropic could have made. (GeekWire)

1.3 Distribution: Claude Native on AWS

The full Claude Platform will be available directly through AWS accounts — no separate Anthropic contract, no separate credentials, no separate billing. Enterprise customers access Claude’s full capability set through existing AWS IAM controls and consolidated invoicing.

In practice, this plugs Anthropic’s sales funnel into Amazon’s global enterprise customer network. The model mirrors the GPT–Azure native integration that has demonstrably reduced enterprise procurement friction for OpenAI. More than 100,000 companies are already building on Claude through AWS Bedrock; the deeper integration is designed to reduce any remaining switching friction. Lyft and Pfizer have been cited as representative early customers.

2. Anthropic’s Revenue: An Order-of-Magnitude Leap

2.1 From $1B to $30B in 15 Months

Anthropic’s annualized revenue (ARR) expanded roughly 30-fold from January 2025 to April 2026 — from $1 billion to more than $30 billion. The acceleration into 2026 is especially striking: the jump from $14 billion to $30 billion took approximately eight weeks.
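To put those growth figures on a common footing, they can be converted to compound rates. This is a minimal sketch that assumes smooth compounding between the two endpoints, which real revenue does not follow; the inputs are the ARR figures quoted above and the helper name is illustrative.

```python
def compound_rate(start, end, periods):
    """Constant per-period growth rate that turns `start` into `end`."""
    return (end / start) ** (1 / periods) - 1


# $1B -> $30B ARR over roughly 15 months (January 2025 to April 2026).
monthly_rate = compound_rate(1.0, 30.0, 15)

# $14B -> $30B ARR over approximately eight weeks.
weekly_rate = compound_rate(14.0, 30.0, 8)

print(f"Implied average monthly growth over 15 months: {monthly_rate:.1%}")
print(f"Implied average weekly growth over the last 8 weeks: {weekly_rate:.1%}")
```

On these inputs the 15-month path implies growth of roughly a quarter per month, and the final eight weeks imply roughly a tenth per week, which is a markedly steeper pace than the long-run average.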

Roughly 80% of revenue comes from enterprise customers, including eight of the Fortune 10. Claude Code is widely credited as the single most important driver of enterprise ARR growth, repositioning Claude from a chatbot into software-engineering infrastructure and producing a step-change in both daily engineer usage time and enterprise procurement priority. More than 1,000 enterprise clients now spend at least $1 million annually — a figure that doubled in under two months.

2.2 Anthropic vs. OpenAI

In absolute terms, Anthropic’s ARR (more than $30 billion) has crossed above OpenAI’s (approximately $25 billion as of April 2026). The comparison carries a caveat: Anthropic reports on a gross basis, while OpenAI reports net. On a like-for-like basis, the gap may be narrower. The directional signal matters more: Anthropic’s enterprise API market share rose from 24.4% to 30.6% in the past several months, with a reported 70% win rate among new enterprise buyers.

On valuation multiples: OpenAI trades at roughly 34x ARR ($852B post-money against ~$25B ARR); Anthropic on VC bids implies roughly 27x ARR ($800B against $30B). The discount partly reflects IPO timing uncertainty and OpenAI’s consumer brand premium — ChatGPT counts more than 900 million weekly active users.
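The multiples are simple ratios and can be reproduced directly from the quoted figures. The sketch below also includes the Series G post-money valuation cited elsewhere in this piece for comparison. Because the two companies report ARR on different bases (gross versus net), the outputs are indicative rather than strictly like-for-like.

```python
def arr_multiple(valuation_bn, arr_bn):
    """Valuation divided by annualized revenue, both in billions of USD."""
    return valuation_bn / arr_bn


# Valuation and ARR figures as quoted in the article.
multiples = {
    "OpenAI (post-money)": arr_multiple(852.0, 25.0),
    "Anthropic (secondary bids)": arr_multiple(800.0, 30.0),
    "Anthropic (Series G post-money)": arr_multiple(380.0, 30.0),
}

for label, multiple in multiples.items():
    print(f"{label}: {multiple:.1f}x ARR")
```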

3. Amazon’s Strategic Intent

3.1 Back Every Top Lab

Within two months, Amazon locked in large-scale strategic positions with both leading AI labs. In February it joined OpenAI’s funding round with $50 billion, alongside a $100 billion AWS commitment. On April 20 it announced the Anthropic agreement on structurally similar terms: equity paired with a cloud commitment of more than $100 billion. The underlying logic is infrastructure-layer thinking: regardless of which lab wins the model race, its training and inference will run somewhere in the cloud. Amazon aims to ensure that “somewhere” is AWS. It is the classic “sell shovels to every gold miner” approach, without having to predict the winner.

3.2 Trainium as a Credible Nvidia Challenger

Anthropic’s deployment of more than one million Trainium 2 chips is the most persuasive real-world evidence Amazon has produced for its custom silicon strategy — more compelling than any benchmark report. Amazon’s competitive thesis against Nvidia is not single-chip performance leadership but total cost of ownership through system-level co-optimization (Trainium + Graviton + Inferentia + Nitro), with Anthropic as an architecture co-design partner.

Trainium 3 is expected to reach volume production by end-2026. If Anthropic successfully trains its next-generation Claude models on Trainium 3, the narrative around Amazon’s ability to challenge Nvidia’s accelerator dominance will strengthen considerably. Notably, Anthropic has not abandoned Nvidia GPUs or Google TPUs — its 2026 Google TPU usage is also growing rapidly, reflecting a pragmatic multi-hardware strategy.

4. The Multi-Cloud Balancing Act

The deal is easily misread as exclusive AWS dependency. It is not. Anthropic maintains deep partnerships with all three hyperscalers simultaneously:

• AWS: Primary cloud and training partner (since 2023). Total commitment up to $33 billion.

• Microsoft Azure: Up to $5 billion investment + $30 billion compute commitment (November 2025).

• Google Cloud: Large-scale TPU agreement; Google holds approximately 14% of Anthropic and has invested roughly $3 billion in total.

This multi-cloud architecture gives Anthropic meaningful negotiating leverage and covers the world’s three largest enterprise IT procurement channels. No single provider becomes a single point of failure for Anthropic.

The Amazon agreement’s “ten-year Trainium priority” clause effectively carves out an asymmetric advantage for AWS within that multi-cloud frame: AWS will capture the largest and fastest-growing share of Anthropic’s compute spend, while the other providers retain a meaningful slice. All three hyperscalers are thus caught in a competitive-cooperative paradox: they are rivals for enterprise AI cloud market share, yet co-investors in the same AI lab, each hoping Anthropic grows as fast as possible. The dynamic resembles TSMC’s position in the semiconductor industry, where rival chip designers all depend on the same foundry.

5. Historical Context

5.1 How the Relationship Evolved

Amazon first invested in Anthropic in September 2023, committing up to $4 billion alongside designating AWS as Anthropic’s primary cloud partner — widely read at the time as a defensive move against the Microsoft–OpenAI alliance. Cumulative investment reached roughly $8 billion through 2024. As Anthropic’s revenue accelerated through late 2025 and into 2026, Amazon began reporting multi-billion-dollar paper gains on its Anthropic stake in quarterly earnings, materially lowering the psychological cost of further capital deployment.

5.2 The Microsoft–OpenAI Template — and Where This Differs

Microsoft invested roughly $13 billion across three rounds (2019, 2021, 2023), acquired approximately 27% of OpenAI’s new for-profit entity, and secured an exclusive Azure cloud agreement that embedded GPT capabilities across Office, GitHub, and Bing. The Amazon–Anthropic agreement follows the same playbook, with one key difference: Anthropic preserved a multi-cloud architecture and did not grant Amazon exclusivity. This reflects both Anthropic’s stronger negotiating position relative to OpenAI in 2019 and a structural shift in the AI ecosystem away from single-vendor lock-in. Amazon’s edge is concentrated at the compute layer (Trainium binding) rather than the platform layer (exclusive distribution).

6. IPO Outlook

6.1 October 2026 Window

Bloomberg reports that Anthropic is in early IPO discussions with Goldman Sachs, JPMorgan, and Morgan Stanley, targeting a listing as early as October 2026 that would raise more than $60 billion. The law firm Wilson Sonsini has been engaged for legal counsel.

The Amazon agreement provides three concrete supports for the IPO narrative: it eliminates investor concern about compute-constrained growth; the $100 billion AWS commitment offers unusual cost-structure transparency for a financial model; and Amazon’s willingness to deploy up to $25 billion more constitutes the most credible institutional endorsement of Anthropic’s commercial viability to date.

6.2 Indirect Public-Market Exposure

Until the IPO, institutional investors have two indirect routes. Alphabet (GOOGL) holds roughly 14% of Anthropic and reported approximately $10.7 billion in net gains on equity securities in Q3 2025, a significant portion attributable to Anthropic. Amazon (AMZN) has similarly recognized Anthropic-related equity appreciation in recent quarterly earnings. Both stocks effectively carry large embedded call options on Anthropic’s valuation.

Valuation reference points: at VC bids of $800 billion against $30 billion ARR, the implied multiple is roughly 27x; at the Series G post-money of $380 billion, it is roughly 13x. Fast-growing SaaS companies have historically traded at 10–20x revenue. Anthropic’s final IPO price will serve as a major anchor for how the entire AI software layer is valued in public markets.

7. Risks and Variables to Watch

7.1 Financial Structure

The $100 billion AWS commitment is a decade-long fixed operating cost. If model architectures shift dramatically (for example, if inference costs fall by an order of magnitude), Anthropic could draw down its committed capacity far more slowly than projected while the fixed obligations remain. The $20 billion conditional tranche also means that capital is not guaranteed if growth decelerates: manageable in the current acceleration phase, but potentially destabilizing if the industry cycle turns.

7.2 Regulatory and Geopolitical Exposure

The Trump administration has reportedly placed Anthropic on a federal agency restricted list, though multiple agencies continue active commercial engagements — reflecting Claude’s de facto indispensability in government AI applications. A deeper geopolitical risk stems from Anthropic’s new identity-verification policy, which requires certain users to provide government-issued photo ID to block access from China, Russia, and North Korea. While addressing compliance requirements, the policy may create differential access effects in international markets and trigger privacy-compliance debates.

7.3 Trainium Roadmap Risk

Anthropic continues to rely on Nvidia hardware for some critical training workloads; its migration to Trainium is still incomplete. Whether Trainium 3 can be delivered at scale by end-2026 and match the training performance of Nvidia’s H100/H200 is the central technical risk to the deal’s compute value proposition. A delay or performance shortfall would leave Anthropic caught between insufficient compute supply and its contractual commitments.

Conclusion

The Amazon–Anthropic agreement is among the most consequential milestones yet in the AI industry’s transition from research competition to infrastructure war. Its significance lies not in the dollar figure but in the industrial logic it reveals: the competitive advantage of top AI labs increasingly depends on the scale and reliability of their compute supply; the core value of cloud providers increasingly depends on locking those labs onto their platforms.

Three signals are worth tracking over the next 12–18 months:

• AWS concentration in Anthropic’s compute mix — a rising AWS share would confirm the agreement’s gravitational effect.

• Trainium 3 volume ramp and workload migration — the decisive test of whether Amazon’s custom silicon bet has genuine competitive legs.

• Anthropic’s IPO pricing — the first major public-market anchor for AI software-layer valuations.

If the Trainium compute bet pays off, this deal will be remembered as the inflection point where the AI chip market’s single-vendor dominance began to crack. If it does not, it will still stand as an exceptional cloud revenue contract — and for Amazon, that may already be enough.