One Name, Three Ambitions: What AI Companies Reveal About Themselves Before You Even Log In

What you call something says everything about who you think should use it.

A while back, I had lunch with a friend who runs procurement at a mid-sized tech company. He mentioned they’d just finished evaluating AI tools for their team.

“Which one did you go with?” I asked.

“ChatGPT,” he said. “Honestly, the IT team made the call. Easiest to roll out — everyone already knows what GPT is.”

I asked if they’d looked at Claude.

He paused. “That’s the one with... Opus? Haiku? I genuinely didn’t know which version to pick, or what those words even meant.”

That comment stuck with me.

Names Are Never Just Names

There’s a principle in strategy consulting that goes something like this: a product’s name is the cheapest public statement a company will ever make about its intentions.

Cheap, because it’s just a word. Public, because everyone can see it. A statement, because it reflects the company’s internal answer to the most fundamental question any business faces: who is this for?

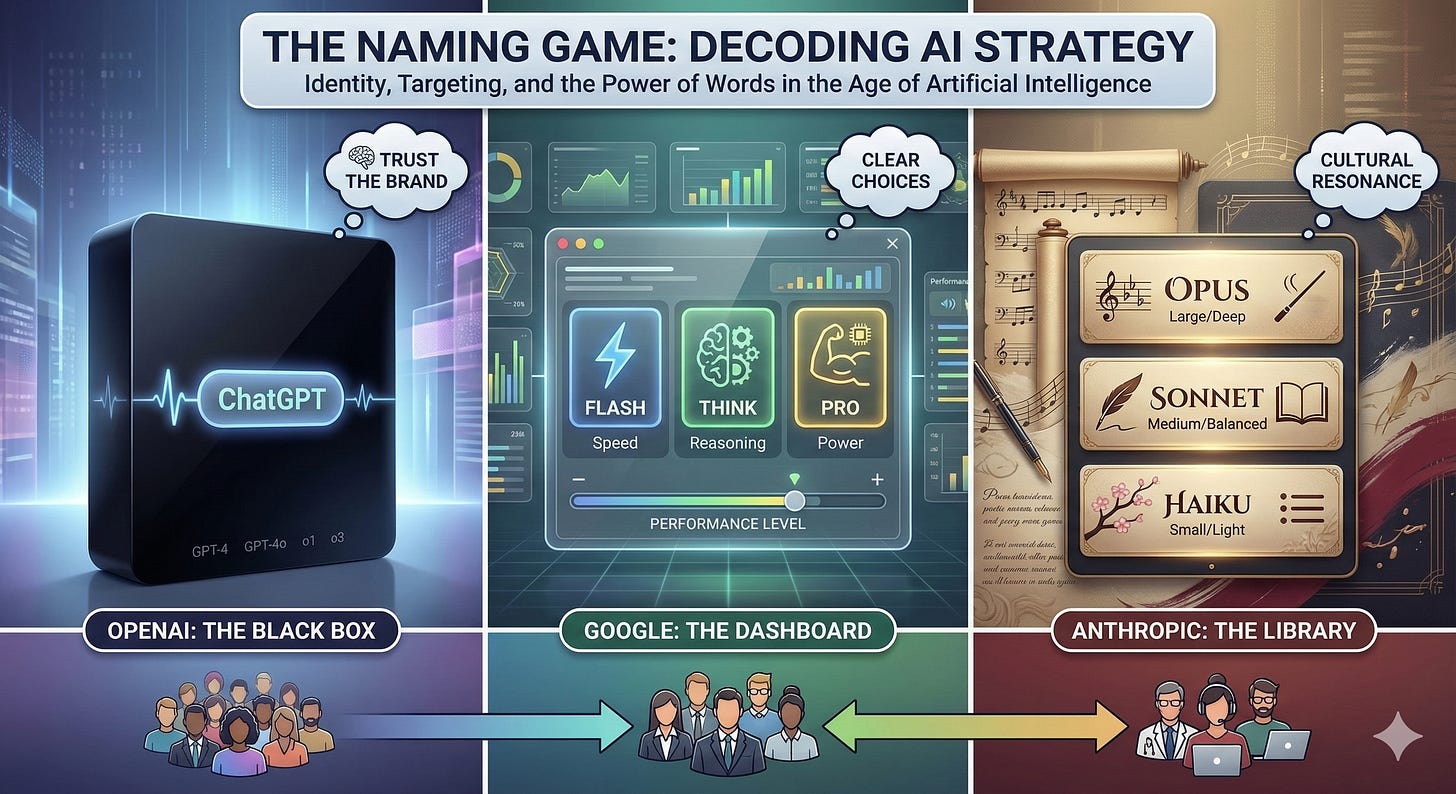

Seen through that lens, the naming conventions that OpenAI, Google, and Anthropic have chosen for their AI models aren’t just marketing decisions. They’re strategic manifestos — most people just haven’t bothered to read them.

OpenAI: Hide the Complexity. Trust the Brand.

Start with OpenAI.

ChatGPT. GPT-4. GPT-4o. o1. o3.

On the surface, these names feel utilitarian — almost engineer-brained. But that’s the point. The technical aesthetic signals authority: this is serious technology. You don’t need to understand it. You just need to trust it.

What’s easy to miss is that OpenAI didn’t start here. In its early days, the platform surfaced multiple distinct models — GPT-3.5, GPT-4, later GPT-4 Turbo — and let users pick between them. Pricing tiers were visible. Version differences were documented. It looked, briefly, a lot like what Anthropic does today.

Then OpenAI quietly reversed course. One by one, the explicit model choices were retired from the default interface. The selector shrank. The branding consolidated around “ChatGPT.” The message shifted from “here are your options” to “we’ve already chosen for you.” It wasn’t a failure of nerve; it was a deliberate strategic decision made after watching how real users actually behaved. Most people didn’t want to choose. They wanted to be told they had the best.

That makes OpenAI’s current philosophy more interesting than it might appear. This isn’t a company that never knew better. It’s a company that learned — from its own user data — that simplicity at scale beats transparency at scale, and acted on that conclusion.

In practice, that means model differences are kept out of sight for ordinary users. Open ChatGPT and you see “ChatGPT.” Not o3. Not GPT-4o. Even if you’re running the latest and most powerful model, the interface won’t make a thing of it. Paying subscribers get a small dropdown if they go looking, but that’s opt-in, not the default experience.

The behavioral psychology here is precise. Barry Schwartz’s The Paradox of Choice documented what happens when you give people too many options: decision quality goes down, satisfaction goes down. OpenAI’s solution is to eliminate the choice entirely. Let users believe they’re always using the best. Don’t make them think about it.

The downstream effect of this strategy is something money can’t easily buy: “GPT” has become a genericized brand. In many non-English-speaking markets, people use “GPT” as a catch-all term for AI chatbots, the way Americans say “Google it” or ask for a “Band-Aid.” That kind of cultural penetration is vanishingly rare. Xerox, Google, Kleenex. The list is short. ChatGPT now processes over 2.5 billion prompts a day, a number that shows what mass-market simplicity can achieve at scale.

The underlying philosophy: internalize all complexity, expose only ease. Pure consumer-product thinking — the simpler, the better. The goal is a billion users who never need to think about what’s running under the hood.

Google: Give People a Choice — Just Make It Obvious

Google’s approach splits the difference.

Open Gemini’s consumer interface and you’ll find three options: Flash, Think, Pro.

Look at that word choice. Flash. Think. Pro. These are terms that require zero cultural context, zero prior knowledge, and translate cleanly into virtually any language. Want speed? Flash. Want reasoning? Think. Want more horsepower? Pro.

Unlike OpenAI, Google chose to make model differences visible. But it labels those differences with the plainest possible vocabulary — no background required to understand what you’re choosing.

This reflects something deep in Google’s DNA as a search company. Its entire business model is built on helping people navigate complexity, not eliminating it. The implicit message to users has always been: we’ll show you the options, but you’re smart enough to decide.

Google also knows its user base is as diverse as it gets — students and enterprises, sub-Saharan Africa and Northern Europe, a couple of billion people spanning every conceivable context. It needs a naming system that works for all of them while still giving enterprise customers the service-tier differentiation that procurement teams and IT departments require. Flash/Think/Pro threads that needle reasonably well.

The underlying philosophy: strike a balance between transparency and accessibility. Show people the differences, but describe them in language anyone can understand. Commercial-friendly by design.

Anthropic: The Name as a Mirror of Values

Now for the most interesting case.

Anthropic. Claude. Opus, Sonnet, Haiku.

Unpack these one at a time.

Anthropic — derived from the Greek anthropos, meaning “human.” It’s not a word you encounter in everyday conversation. You need a passing familiarity with etymology, or exposure to academic concepts like the Anthropocene, to immediately grasp what the name is reaching for.

Claude — a tribute to Claude Shannon, the mathematician who essentially invented information theory. Shannon’s 1948 paper, A Mathematical Theory of Communication, laid the foundation for every digital technology that followed: the internet, mobile phones, modern computing, AI itself. It’s an elegant homage. But you have to know the history to feel the weight of it.

Opus, Sonnet, Haiku — this is where it gets genuinely clever. And the point of these three names is often misread.

They’re not a hierarchy of prestige across Western and Eastern traditions. They describe function: specifically, a spectrum of volume and depth.

Opus is a large-scale musical work. Substantial. Complete. Built for tasks that require full depth and breadth.

Sonnet is a 14-line poem with strict formal constraints; it achieves completeness within limits, a form defined by balance.

Haiku is three lines, seventeen syllables: maximum meaning from minimum material. The most refined compression.

Large. Medium. Small. Deep. Balanced. Light. Three equal forms, each masterful in its own register — just operating at different points on the scale. The naming system encodes capability and efficiency together, without suggesting that any one of them is inherently superior.

What Does All of This Actually Reflect?

It’s tempting to read Anthropic’s naming as deliberate elite curation — a velvet rope disguised as vocabulary. But that reading is too mechanical.

The more accurate interpretation is that these names are simply an authentic expression of who Anthropic is.

The company was founded by former OpenAI researchers, many of them with backgrounds in academia and AI safety. These are people who default to precise language, who find meaning in intellectual lineage, who naturally reach for Shannon or Keats when they need a reference point. Naming their AI assistant after Claude Shannon wasn’t a positioning exercise. It was a genuine act of intellectual respect — the kind of thing that felt obvious to the people in the room.

The naming also fits a deliberate market position. Anthropic made an early strategic choice not to fight for consumer dominance. Its target users (enterprise teams, developers, researchers) don’t just use AI. They need to understand what they’re using, why it behaves the way it does, and how to integrate it into complex workflows. For that audience, Opus/Sonnet/Haiku is actually quite clear: it immediately communicates “this is a family of tools with distinct capability profiles.”

The result is a kind of gravitational sorting. Users who are drawn to the naming tend to already be the users Anthropic most wants to serve. But that’s less a designed filter than a natural convergence — people with similar values, finding each other.

A Historical Parallel (With Caveats)

This dynamic has precedent.

In the 1980s and early 1990s, IBM owned enterprise computing. Mainframes, complexity, professional-grade everything: products designed for specialists, sold to organizations. Apple took a different path from day one: the Macintosh was named after an apple variety, brought the graphical interface to a mass market in place of command-line input, and was explicitly built for people who weren’t computer experts.

The rough parallel to today’s AI landscape is easy to see. IBM had brand recognition at a scale that dwarfed Apple’s. It served the market that paid the most. Apple built something less “enterprise-friendly” in the traditional sense and ended up with a user base that identified with it deeply.

The analogy has real limits, though. Apple eventually captured both markets — professional and consumer — and became the most valuable company in the world. That trajectory suggests that starting from a more selective position doesn’t have to mean accepting a permanent ceiling on scale.

More importantly, the pace of change is incomparable. AI model capabilities are iterating in months, not years. Competitive positions that seem durable today can look entirely different eighteen months from now. Whether Anthropic’s positioning will remain an asset as the market matures is still an open question — and a genuinely interesting one.

The Numbers Are Starting to Tell a Story

Theory aside, the market is offering early validation.

According to a July 2025 report by Menlo Ventures — based on a survey of 150 technical decision-makers — Anthropic holds 32% of enterprise LLM usage, ahead of OpenAI at 25%. Two years ago, that order was inverted: OpenAI commanded 50% of enterprise usage while Anthropic sat at 12%.

In code generation, the fastest-growing enterprise AI application, Anthropic’s share reaches 42% — double OpenAI’s 21%.

Revenue tells a similar story. Anthropic grew from roughly $87 million in annualized revenue at the start of 2024 to over $5 billion by mid-2025, one of the steepest growth curves in enterprise software history. Its business customer count grew from under 1,000 to over 300,000 in roughly the same period.

There’s a structural reason these numbers skew the way they do. Enterprise customers don’t switch easily. Once an organization has integrated an AI model into its workflows, compliance stack, and developer tooling, the cost of migration is substantial. Anthropic’s precise targeting means that when it wins a customer, it tends to keep them. Consumer-side users, by contrast, can jump from ChatGPT to Gemini and back with essentially zero friction — no contracts, no integrations, no institutional memory at stake.

It’s worth noting that OpenAI’s consumer dominance remains essentially unchallenged. More than 2.5 billion ChatGPT prompts per day is not a number Anthropic is approaching. These are not zero-sum strategies competing for the same pool of users. They’re playing in different layers of the same large market — and, for now, both approaches are working.

Three Bets, No Single Right Answer

The most important thing to resist here is a ranking.

All three approaches are rational. They simply reflect different bets about where the value in AI ultimately concentrates.

OpenAI is betting that AI becomes infrastructure — electricity-scale ubiquity. At that scale, every percentage point reduction in friction translates to hundreds of millions of users. Generic trademark status, once achieved, is nearly impossible to dislodge. You don’t un-become “the Google of AI.”

Google is betting that AI’s deepest commercial value lies in its integration into productivity tools and advertising infrastructure. It needs a large user base to fuel its data flywheel while maintaining legible service tiers for enterprise buyers. Flash/Think/Pro threads both needles simultaneously.

Anthropic is betting that as model capabilities converge, as they increasingly do, loyalty will be driven by identity alignment and deep integration rather than marginal capability differences. A smaller, more committed customer base may generate more durable value than a larger, more transient one.

These bets are not mutually exclusive. The AI market is large enough for all three to be right at once.

The Bigger Question

There’s a pattern here that extends well beyond AI.

Every company eventually faces the same fundamental question: do you optimize for reach, or for resonance?

OpenAI’s answer is reach. Anthropic’s answer is resonance. Google, as usual, is trying to have both.

There’s no universally correct answer. But the companies that try to be everything to everyone often end up being nothing in particular to anyone.

What Anthropic has done (naming its products after Claude Shannon, after musical forms, after centuries-old poetic structures) is make a statement about what kind of company it wants to be. Not the one that makes things simpler. The one that assumes a certain kind of user will come looking, and builds toward them.

Whether that bet pays off at the scale the company’s $380 billion valuation implies remains to be seen.

But the next time you open Claude and see Opus, Sonnet, and Haiku where a competitor would have written Flash, Think, and Pro — you’re watching a strategic philosophy render itself visible, one word at a time.

Naming is never just naming. It’s the cheapest, most public answer a company will ever give to the question: who, exactly, is this for?