The Death of Sora: When the Demo Meets the Balance Sheet

Where Is the Commercial Boundary of Video AI?

Sora didn’t die because the technology wasn’t good enough. It died because the technology was too expensive.

That is the starting point for understanding everything. It is the core logic behind OpenAI’s decision to shut down Sora on March 24, 2026.

I. Exit: How a Product Gets Crossed Off the Priority List

On March 24, 2026, OpenAI posted a brief announcement on X: “We’re saying goodbye to the Sora app.”

No lengthy explanation. No visible warning. OpenAI ended the lifecycle of the Sora standalone app with a single short statement. The manner of this exit is itself telling: it was not a slow marginalization but a swift deletion from the priority list.

According to The Wall Street Journal, Disney learned of the closure less than an hour before the announcement was made public, despite months of ongoing negotiations. The two companies had signed a collaboration framework in December 2025 that would have licensed more than 200 IP characters from Disney, Marvel, Pixar, and Star Wars for use in Sora’s video generation. That deal was now void. No money had reportedly changed hands.

The Sora app is set to shut down on April 26, 2026, with the API following on September 24, 2026.

In retrospect, this ending was not surprising. The technical architecture and resource structure Sora depended on from the very beginning already contained the constraints that would eventually surface. The noise around the demo only temporarily obscured them.

II. The Starting Point: A World-Shocking Demo — and What It Concealed

On February 15, 2024, OpenAI released a collection of demonstration videos generated by Sora.

A fluffy prehistoric mammoth trudging through snow, camera angles perfectly composed. A Tokyo street in winter, pedestrians moving with natural gait. A California Gold Rush scene that looked like archival footage, color-graded with period-appropriate grain. All of it generated directly from text descriptions, up to one minute long, at 1080p resolution.

Sora’s underlying architecture is a Diffusion Transformer (DiT) — combining diffusion models with a Transformer backbone that treats video as a sequence of spatiotemporal tokens rather than processing it frame by frame. This allowed the model to maintain physical consistency across frames: objects don’t vanish inexplicably, lighting follows real-world logic. OpenAI positioned Sora as a “world simulator,” a step toward understanding the physical world. At the time, that was not an overstatement.

The public response was extraordinary. Hollywood director Tyler Perry announced he was pausing an $800 million studio expansion. The Writers Guild and Screen Actors Guild now had a specific target for their anxieties. Sora became one of the most discussed AI products of 2024.

For an entire year, almost everyone discussing Sora had never actually used it. Every conversation was built on a handful of cherry-picked best-case outputs.

III. The Long Closure: How a First-Mover Advantage Consumed Itself While Waiting

After the February 2024 demo, OpenAI did not open Sora to the public.

For nearly ten months, Sora remained in a near-sealed state. Access was limited to a small number of “red team” safety researchers and a handful of invited creative professionals. Ordinary users, including paying ChatGPT Plus and Pro subscribers, could not touch it.

Sora finally opened to paying ChatGPT users on December 9, 2024. Ten months had passed since those demo clips first appeared.

Competition did not wait. Runway shipped new versions. Kuaishou’s Kling launched in June 2024. Google Veo kept iterating. ByteDance was deploying its own video generation capabilities. By the time Sora finally opened, its technical lead had been substantially eroded by competitors during those ten months of closure.

Sora’s first-mover advantage was consumed by its own waiting.

The constraint behind the delay was later summed up in a single line from OpenAI Sora lead Bill Peebles in October 2025: “Our GPUs are melting.”

IV. The Core Problem: Not “Is It Worth Using?” But “Can We Afford to Offer It?”

Most coverage of Sora’s failure has focused on user retention.

The numbers are real: after the Sora standalone app launched in September 2025, downloads peaked at roughly 3.3 million in November (combined iOS and Google Play), then fell to approximately 1.1 million within three months — a 66% decline. According to The Information, 30-day retention was under 8%.

But that is a symptom, not the cause.

The real problem is that the unit economics of this product never worked from the start.

The gap between cost and revenue was not a difference of magnitude. It was a difference of dimension.

According to estimates by financial analysis firm Cantor Fitzgerald (as reported by CNBC), generating a single 10-second video cost OpenAI approximately $1.30 in compute. At peak usage, The Wall Street Journal’s reporting showed Sora consuming roughly $1 million per day in compute, a run-rate of roughly $365 million a year. Meanwhile, according to app analytics firm Appfigures, total revenue from in-app purchases across Sora’s entire product lifetime was approximately $2.1 million.
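To make the scale of that gap concrete, here is a rough back-of-envelope sketch in Python that uses only the three figures quoted above. It assumes, purely for illustration, that all of the peak daily compute spend went to 10-second generations at the estimated per-video cost. The function names are hypothetical, and the inputs are external estimates, not figures disclosed by OpenAI.

```python
# Figures quoted in the article (external estimates, not OpenAI disclosures).
COST_PER_VIDEO_USD = 1.30             # Cantor Fitzgerald estimate, 10-second clip
PEAK_DAILY_COMPUTE_USD = 1_000_000    # peak daily compute spend, per WSJ reporting
LIFETIME_IAP_REVENUE_USD = 2_100_000  # lifetime in-app purchases, per Appfigures


def implied_videos_per_day(daily_compute_usd: float, cost_per_video_usd: float) -> float:
    """Generations per day implied by the spend, assuming every dollar
    went to clips at the estimated per-video cost (a simplification)."""
    return daily_compute_usd / cost_per_video_usd


def days_of_compute_covered(revenue_usd: float, daily_compute_usd: float) -> float:
    """How many days of peak compute spend the given revenue would pay for."""
    return revenue_usd / daily_compute_usd


if __name__ == "__main__":
    videos = implied_videos_per_day(PEAK_DAILY_COMPUTE_USD, COST_PER_VIDEO_USD)
    days = days_of_compute_covered(LIFETIME_IAP_REVENUE_USD, PEAK_DAILY_COMPUTE_USD)
    print(f"Implied generations per day at peak: {videos:,.0f}")
    print(f"Days of peak compute covered by lifetime revenue: {days:.1f}")
```

Under those assumptions, peak spend implies on the order of 770,000 generations a day, and the product’s entire lifetime in-app revenue pays for about two days of it.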

Understanding why requires understanding the compute asymmetry between text and video generation. A text model emits tokens one at a time, and the work per response grows with the length of the text. A diffusion-based video model instead denoises the entire clip over many iterative steps. Because the clip is represented as a sequence of spatiotemporal tokens, attention has to relate each patch to patches in other frames to keep motion and lighting consistent. Token count grows with both resolution and duration, and the cost of full self-attention grows roughly quadratically with token count, so longer and sharper clips get expensive much faster than linearly. At 24 frames per second, a 10-second 1080p video contains about 240 frames, each of which must stay consistent with what came before and after. Engineering can reduce the constants, but it cannot remove the scaling, which is a property of the task itself.
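One way to see how cost grows with duration and resolution is to count tokens. The sketch below assumes a model that splits video into spatiotemporal patches and applies full self-attention, whose pairwise cost grows with the square of the token count. The patch sizes are hypothetical placeholders chosen for illustration; they are not Sora’s actual configuration.

```python
def token_count(width: int, height: int, frames: int,
                spatial_patch: int = 16, temporal_patch: int = 4) -> int:
    """Number of spatiotemporal tokens for a clip.

    spatial_patch and temporal_patch are hypothetical values: each token is
    assumed to cover a spatial_patch x spatial_patch pixel area across
    temporal_patch consecutive frames.
    """
    return (width // spatial_patch) * (height // spatial_patch) * (frames // temporal_patch)


def attention_pairs(tokens: int) -> int:
    """Token pairs compared by one full self-attention layer (quadratic in tokens)."""
    return tokens * tokens


if __name__ == "__main__":
    fps = 24
    for seconds in (5, 10, 20):
        n = token_count(1920, 1080, seconds * fps)
        print(f"{seconds:>2}s at 1080p: {n:>9,} tokens, {attention_pairs(n):.2e} attention pairs")
```

In this toy model, doubling the clip length doubles the token count but quadruples the attention work per layer, and that work is repeated at every denoising step.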

For OpenAI, Sora wasn’t just consuming GPUs. It was consuming GPUs that could otherwise be running higher-revenue, higher-strategic-value workloads. Every unit of compute handling a Sora request was a unit unavailable to ChatGPT enterprise queries, Codex code generation, or API service. That opportunity cost grew rapidly as Anthropic pressed its attack in the enterprise market.

Low user retention wasn’t the cause of Sora’s failure. It was the result of a product that users were never really allowed to use. A product throttled from day one for lack of compute cannot have high retention.

V. Three Paths: How Compute Structure Determines Multimodal Strategy

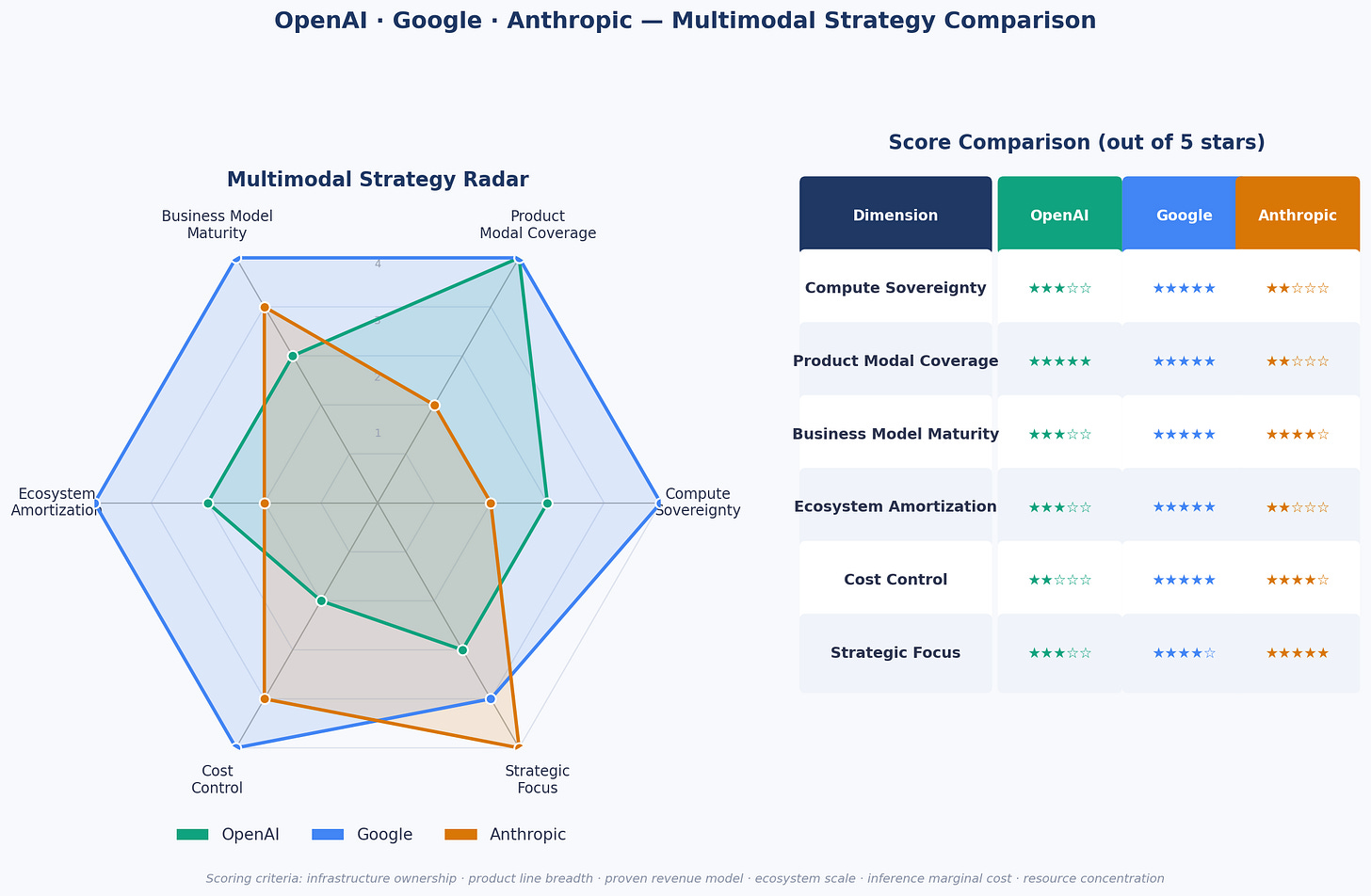

Sora’s fate is a key to understanding the current AI competitive landscape. The three major players — OpenAI, Google, and Anthropic — have taken three fundamentally different paths in multimodal strategy, each a direct reflection of their compute structure and strategic judgment.

OpenAI: Model Ambition Without Matching Infrastructure Sovereignty

OpenAI’s multimodal strategy has long followed a “do it all” logic: image generation (DALL-E / GPT-image), video generation (Sora), voice interaction, coding tools (Codex) — full coverage across every dimension, trying to establish presence everywhere.

Behind this posture is an implicit assumption: raise enough capital and you can burn your way to scale. But OpenAI doesn’t own its compute. It relies on purchased GPU capacity, primarily through its Azure contract with Microsoft. Every new product line competes for the same finite pool of compute.

OpenAI’s mistake wasn’t failing to see the importance of multimodal. It was treating multimodal, too early, as a “capability map” rather than a “resource allocation problem.” In a compute-constrained environment, full coverage means no single line can be executed to the highest standard. Closing Sora represents a forced contraction of OpenAI’s multimodal strategy: a retreat from text + image + video back to text + image, with compute redirected toward agentic AI. It is a strategic shift from “showing you impressive things” to “helping you actually get things done.”

Google: Full-Modal Possibility Through Infrastructure Hegemony

Among the three, Google is currently closest to a fully closed multimodal product loop: text (Gemini), video (Veo), image (Imagen), audio (NotebookLM) — a viable product in every direction.

This isn’t because Google’s model technology is inherently superior. It’s because its compute cost structure is fundamentally different. Google operates its own TPU (Tensor Processing Unit) clusters, purpose-built for deep learning inference and training. It doesn’t queue for Nvidia capacity or pay market-rate premiums for external compute. Its inference cost is the marginal cost of self-built infrastructure — not a price set by someone else. That difference, in the expensive arena of video generation, is precisely what determines what can be built and what can’t be afforded.

Google can charge enterprises $249 per month for Veo 3 while simultaneously offering per-second billing through Vertex AI. That flexible pricing structure represents pricing power built on a cost advantage — not subsidized by burning cash. OpenAI never reached that state with Sora.

Anthropic: Turning Voluntary Restraint Into Strategic Depth

Anthropic’s approach is the most “conservative” of the three in appearance — and possibly the most commercially clear-eyed.

As of 2026, Claude models cannot natively generate images, let alone video. This is not a gap in technical capability — code-level signals suggest relevant research exists internally — but a deliberate resource allocation decision. As an independent AI safety company, Anthropic has no TPU infrastructure and a funding base far smaller than OpenAI’s. With constrained compute, it must make hard choices: concentrate everything on the direction it judges to have the clearest commercial value — text reasoning, code generation, long-document processing.

What Anthropic is betting on is a time-bound judgment: in the current compute cost environment, the highest-value application of AI is improving the productivity of knowledge workers, not generating video or images. That judgment may not hold forever. But faced with the reality of Sora burning through its budget, it has at least proven defensible for this phase.

Figure: OpenAI · Google · Anthropic — Multimodal Strategy Comparison

VI. The Deeper Lesson: Demo Economics vs. Balance Sheet Economics

A demo that shocks the world and a sustainable commercial product are two completely different things.

Sora’s demonstration videos represented a genuine technical breakthrough. But part of what made the demos so shocking is that they were carefully curated best-case outputs — not average results. When Sora finally opened to the public in December 2024, users encountered a perceptible gap between the experience and those original clips.

More critically: even if the real product had fully matched the demo quality, the business model still wouldn’t have worked. A $1.30-per-generation compute cost cannot be recovered by consumer-tier subscriptions under current GPU supply and pricing conditions. Price subscriptions high enough to cover costs and the user base becomes tiny; a tiny user base means insufficient data to improve the model; without model improvement, costs can’t come down. It is a self-reinforcing trap.
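The subscription math can be sketched in a few lines of Python. The $1.30 figure is the estimate quoted earlier; the $20 monthly price is a hypothetical consumer tier chosen only for illustration, and the calculation ignores every cost other than generation compute.

```python
COST_PER_GENERATION_USD = 1.30  # external estimate quoted in the article


def breakeven_generations(monthly_price_usd: float, cost_per_generation_usd: float) -> float:
    """Generations per month at which compute cost equals the subscription price."""
    return monthly_price_usd / cost_per_generation_usd


def required_price(generations_per_month: int, cost_per_generation_usd: float) -> float:
    """Monthly price needed just to cover compute for a given usage level."""
    return generations_per_month * cost_per_generation_usd


if __name__ == "__main__":
    hypothetical_price = 20.0  # assumption: an illustrative consumer subscription price
    n = breakeven_generations(hypothetical_price, COST_PER_GENERATION_USD)
    print(f"A ${hypothetical_price:.0f}/month plan breaks even at about {n:.1f} generations")
    for usage in (30, 100, 300):
        price = required_price(usage, COST_PER_GENERATION_USD)
        print(f"{usage} generations/month needs ${price:.2f} to cover compute")
```

On these assumptions, a $20 plan is underwater after roughly 15 clips a month, and a user making 100 clips a month would need to pay about $130 just to cover compute.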

Sora reveals a broader reality about the current AI industry: in the era of expensive compute, expanding multimodal capability is a game only a handful of companies can afford to play. Only companies with self-built compute infrastructure or massive ecosystems to amortize costs can sustain video generation at scale. Google is the former. Most companies — including today’s OpenAI — are renters of compute, not owners.

VII. Beyond the Boundary

After Sora’s closure, technical exploration in video AI has not stopped. OpenAI’s next-generation model (internal codename “Spud”) is in development. Google Veo continues to iterate. ByteDance’s Seedance 2.0 is expanding in China and select overseas markets, though blocked in the US by copyright disputes.

These developments confirm that video AI as a technical direction has not been repudiated. What has been repudiated is a specific business model: consumer-facing, unlimited-generation, platform-subsidized compute.

Until compute costs drop by an order of magnitude, that model will remain unviable. The compute cost reduction depends on chip process advances, the proliferation of inference-specialized hardware, and maturation of model compression — all underway, but slower than the growth of video generation’s compute appetite.
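How long an order-of-magnitude drop takes depends entirely on the rate of decline, which nobody knows in advance. As a rough illustration, the Python sketch below computes the time needed for a tenfold reduction under a constant annual rate of decline. The rates are hypothetical inputs, not forecasts, and the function name is an illustrative choice.

```python
import math


def years_to_reduce(factor: float, annual_decline: float) -> float:
    """Years until cost falls by `factor`, assuming cost shrinks by a constant
    fraction `annual_decline` each year (for example, 0.5 means it halves yearly)."""
    if not 0.0 < annual_decline < 1.0:
        raise ValueError("annual_decline must be between 0 and 1")
    if factor <= 1.0:
        raise ValueError("factor must be greater than 1")
    return math.log(factor) / -math.log(1.0 - annual_decline)


if __name__ == "__main__":
    for rate in (0.2, 0.3, 0.5):  # hypothetical annual declines in cost per generation
        print(f"{rate:.0%} decline per year: {years_to_reduce(10, rate):.1f} years to a 10x drop")
```

Even if cost per generation halved every year, a tenfold drop would take a little over three years, and any growth in clip length or resolution over that period would push the break-even point further out.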

In the meantime, the business logic that can survive is more restrained: professional users, high-price coverage of high costs, strict usage caps. Not the narrative of “video AI disrupting Hollywood,” but the reality of “video AI becoming an expensive tool in the professional creator’s toolkit.”

Mass-market video AI is a genuine future direction. Just not yet.

OpenAI tried to use Sora to simulate the physical laws of the real world. In the end, it was undone by the most ruthless law the real world has to offer: the physics of economics and compute.

Sora’s true legacy is not a collection of dazzling generated clips. It is an industrial boundary, verified at great cost: in the age of AI, being able to build something does not mean you can afford to offer it. And shocking the world does not mean you have a business.

Primary sources: The Wall Street Journal reporting on Sora’s closure, The Information reporting on retention, TechCrunch reporting, Cantor Fitzgerald analyst estimates (via CNBC), Appfigures app download and revenue data, OpenAI official statements. Some cost figures are external estimates, not figures disclosed by OpenAI.