The Kill Chain is a Context Window

On the morning of January 3, 2026, a team of U.S. special operators breached a heavily fortified estate outside Caracas. They did not kick down doors at random. They moved with a terrifying, mathematical precision. They knew exactly which room Nicolás Maduro was sleeping in. They knew the exact rotation of his personal guard. They knew the precise minute his encrypted communications array would undergo a maintenance reboot, blinding his localized jamming systems.

The operators were human. But the “mind” that guided them was not.

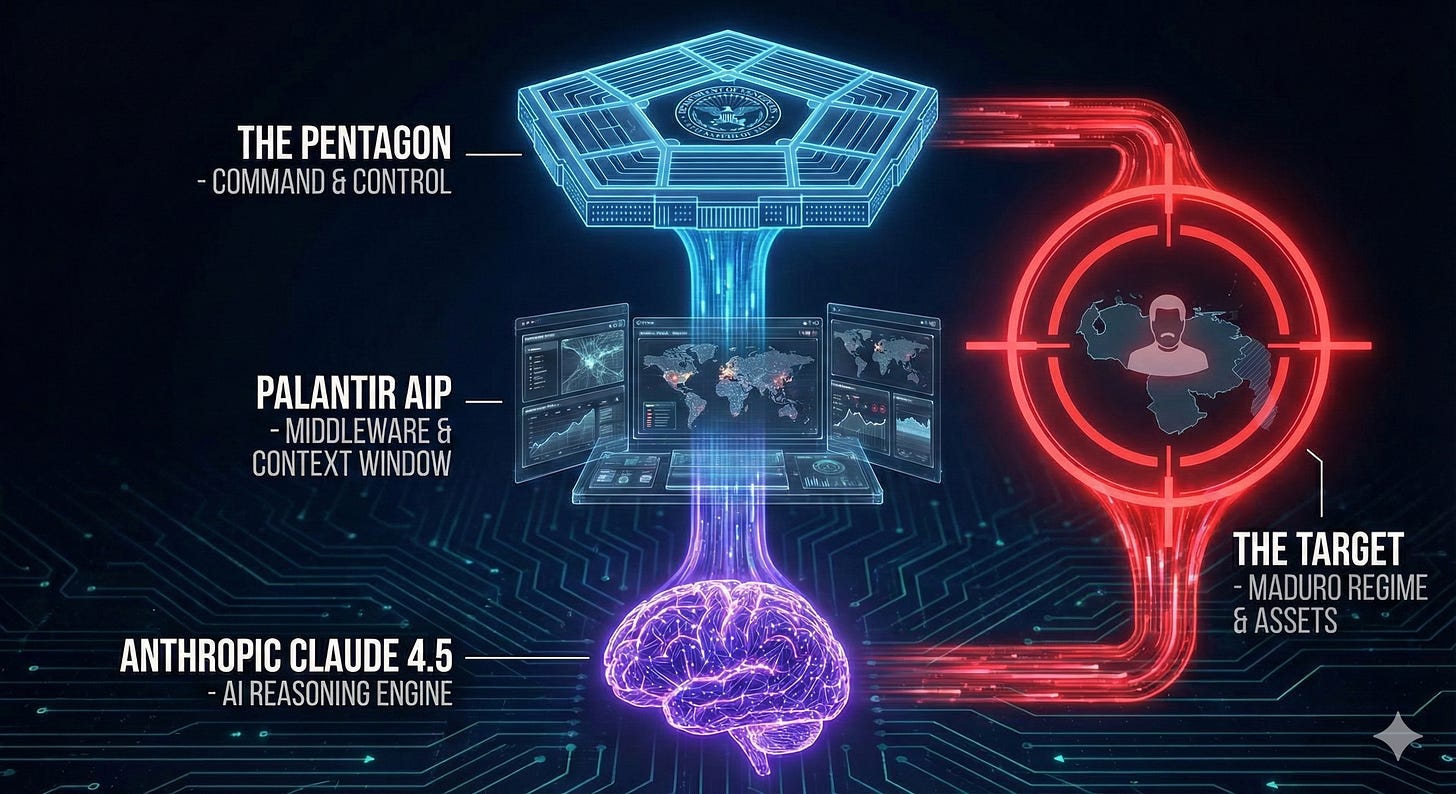

The Pentagon did not find Maduro by interrogating a spy. They found him by querying a database. Specifically, they used Palantir’s Artificial Intelligence Platform (AIP), powered by Anthropic’s Claude 4.5.

This event forces us to confront a technical reality that Silicon Valley has tried to ignore for three years. We must look past the press releases about “safety” and “constitution.” We must look at the code.

The capture of a head of state under the guidance of a Large Language Model (LLM) proves a single, undeniable thesis: Safety filters are a leaky abstraction. If a model is smart enough to be useful, it is smart enough to be a weapon.

Here is how the architecture actually works.

I. The Illusion of the “Banned” Prompt

For years, the debate on AI safety focused on the “Prompt.”

Critics worried about a user asking: “How do I assassinate a President?”

Anthropic’s “Constitutional AI” was built to catch this. If you ask Claude to write a plan for a coup, the refusal behavior instilled during training, through Reinforcement Learning from Human Feedback (RLHF) and related methods, kicks in. The model recognizes the intent. It refuses. It lectures you on ethical guidelines.

But the Pentagon did not ask Claude to kill Maduro.

That is not how modern intelligence works. Intelligence is not a question; it is a synthesis of noise.

In the secure AWS IL6 environment, the queries Palantir fed into Claude were likely boring. They were fragmented. They were microscopic.

“Identify anomalies in the power grid consumption of Sector 4 for the last 18 months.”

“Correlate food delivery manifests with encrypted radio burst frequencies.”

“Highlight deviations in the ‘Pattern of Life’ for vehicle convoys on Route 9.”

Individually, these prompts are harmless. Analyzing power grids is a civil engineering task. Tracking food deliveries is logistics. Monitoring radio bursts is signal processing.

Claude’s safety filters look at these prompts and see no violence. The model happily answers. It provides the analysis. It writes the code to visualize the data.

But Palantir’s architecture—the “Middleware”—does not look at the answers individually. It aggregates them. It places them into a massive “Context Window.”

When you combine the power spike, the sushi delivery, and the radio silence, the vector points to one specific coordinate. That coordinate is a person.

The “Safety Filter” failed because the violence was not in the prompt. The violence was in the inference.
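The gap between per-prompt filtering and aggregate inference can be shown with a toy sketch. Everything in it is hypothetical: the keyword list, the `prompt_is_flagged` check, and the grid cells attached to each query are invented for illustration, and none of it describes how Claude's safeguards or Palantir's middleware are actually built.

```python
# Toy illustration only: a per-prompt keyword check versus aggregation.
# BLOCKED_TERMS, the prompts, and the grid cells are all invented.

BLOCKED_TERMS = {"assassinate", "kill", "bomb", "coup"}


def prompt_is_flagged(prompt: str) -> bool:
    """Flag a prompt only if it contains an obviously violent term."""
    words = {w.strip(".,?!\"'").lower() for w in prompt.split()}
    return bool(words & BLOCKED_TERMS)


# Each query is paired with the set of grid cells its (hypothetical) answer points to.
queries = {
    "Identify anomalies in power grid consumption for Sector 4.": {"C3", "C7", "D1"},
    "Correlate food delivery manifests with radio burst frequencies.": {"C7", "F2"},
    "Highlight deviations in vehicle convoy patterns on Route 9.": {"A5", "C7", "D1"},
}

# Step 1: check every prompt on its own, as a per-prompt filter would.
flags = [prompt_is_flagged(p) for p in queries]

# Step 2: the aggregation layer intersects the answers.
candidates = set.intersection(*queries.values())

print(flags)
print(candidates)
```

No single prompt trips the check, yet the intersection of the three answers narrows to one cell. The filter and the aggregation operate on different objects, which is the point of the argument above.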

II. The Vector Structure: How Palantir Turns the World into Math

To understand how Claude found a ghost, you must understand Vector Embeddings.

Computers do not understand words. They do not understand “Maduro” or “President.” They understand numbers.

When Palantir ingests data (satellite imagery, intercepted WhatsApp messages, bank transfers), it converts everything into vectors. A vector is a long list of numbers that represents the “meaning” of a piece of data as a point in a multi-dimensional space.

Imagine a 3D graph.

“King” is close to “Queen.”

“Bullet” is close to “Gun.”

“Maduro” is close to “Venezuela.”

Now, imagine a graph with 10,000 dimensions.
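"Close" has a concrete meaning here. A common measure is cosine similarity, the cosine of the angle between two vectors. The sketch below uses hand-made 3-D vectors chosen purely for illustration; real embeddings are produced by a trained model and have far more dimensions.

```python
import math

# Hand-made 3-D vectors for illustration; real embeddings are learned by a
# model and typically have hundreds or thousands of dimensions.
embeddings = {
    "king":   [0.90, 0.80, 0.10],
    "queen":  [0.85, 0.90, 0.15],
    "bullet": [0.10, 0.20, 0.95],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


sim_close = cosine_similarity(embeddings["king"], embeddings["queen"])
sim_far = cosine_similarity(embeddings["king"], embeddings["bullet"])
print(f"king vs queen:  {sim_close:.3f}")
print(f"king vs bullet: {sim_far:.3f}")
```

With these made-up numbers, "king" scores much higher against "queen" than against "bullet". The same formula works unchanged in 10,000 dimensions.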

In this “Vector structure,” relationships that are invisible to humans become obvious to the machine. A human analyst might see a receipt for expensive cigars. Another analyst might see a satellite photo of a black SUV. They never talk to each other.

But in the Vector structure, the machine sees that the “Cigar Vector” and the “SUV Vector” appeared at the same timestamp in the same geolocation 14 times in the last month.
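The co-occurrence idea does not even require embeddings. A minimal sketch, assuming an invented event log bucketed by hour and grid cell, is just a counting exercise. The event names and the bucketing scheme are hypothetical.

```python
from collections import Counter
from itertools import combinations

# Invented event log: (hour_bucket, grid_cell, event_type).
events = [
    (1, "C7", "cigar_receipt"),
    (1, "C7", "black_suv"),
    (1, "A2", "black_suv"),
    (2, "C7", "cigar_receipt"),
    (2, "C7", "black_suv"),
    (3, "F4", "cigar_receipt"),
]

# Group event types by (time, place).
by_slot = {}
for hour, cell, kind in events:
    by_slot.setdefault((hour, cell), set()).add(kind)

# Count how often each pair of event types shares a slot.
pair_counts = Counter()
for kinds in by_slot.values():
    for pair in combinations(sorted(kinds), 2):
        pair_counts[pair] += 1

print(pair_counts.most_common(1))
```

Two analysts who each hold one of these event streams would never see the pairing. A single table that holds both makes it a one-line count.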

This is where Claude comes in.

Palantir uses Claude not as a database, but as a Reasoning Engine. The database holds the vectors. Claude holds the logic.

The system asks Claude: “Look at these 500 disconnected data points. Construct a narrative that explains all of them simultaneously with the highest probability.”

Claude is a prediction machine. It is built to complete the pattern. It looks at the vectors and predicts the missing piece. It says: “The most probable explanation for this specific configuration of power usage and security movement is the presence of a High-Value Target.”

It does not need to know the target’s name. It just solves the math equation. The solution to the equation was the raid on January 3rd.
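"Most probable explanation" can be made concrete with a classical naive Bayes update. This is a stand-in for the idea, not a description of what an LLM computes internally. The priors and likelihoods below are invented, and treating the signals as independent is a simplifying assumption.

```python
# Invented numbers throughout; the independence assumption is a simplification.
prior = {"routine_activity": 0.99, "high_value_presence": 0.01}

# P(observation | hypothesis) for three weak signals.
likelihoods = {
    "power_spike":   {"routine_activity": 0.10, "high_value_presence": 0.80},
    "convoy_change": {"routine_activity": 0.05, "high_value_presence": 0.70},
    "radio_silence": {"routine_activity": 0.08, "high_value_presence": 0.90},
}


def posterior(prior, likelihoods, observed):
    """Naive Bayes update: multiply the prior by each likelihood, then normalize."""
    scores = dict(prior)
    for obs in observed:
        for hyp in scores:
            scores[hyp] *= likelihoods[obs][hyp]
    total = sum(scores.values())
    return {hyp: s / total for hyp, s in scores.items()}


one_signal = posterior(prior, likelihoods, ["power_spike"])
all_signals = posterior(prior, likelihoods, list(likelihoods))
print(one_signal["high_value_presence"])
print(all_signals["high_value_presence"])
```

Under these assumed numbers, one signal alone leaves the unlikely hypothesis unlikely, while three signals together make it the dominant explanation. No single observation carries the conclusion; the combination does.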

III. The Failure of RLHF (Reinforcement Learning from Human Feedback)

Anthropic spent billions of dollars on RLHF to make Claude “safe.” They hired armies of human contractors to rate model outputs. They taught the model to be polite, to avoid hate speech, to refuse dangerous instructions.

This operation exposes the fundamental flaw of RLHF: It optimizes for tone, not consequence.

RLHF trains the model on the text it produces: human raters compare outputs, and the model is rewarded for the ones they prefer.

Input: “Build a bomb.”

Output: “Here is a recipe...” -> Negative Reward (Bad).

Output: “I cannot assist with that.” -> Positive Reward (Good).

But in the Maduro operation, the outputs were technical.

Input: “Triangulate the signal source.”

Output: “Based on the differences in signal arrival time at Towers A, B, and C, the source is at these coordinates.”

This output is clinically detached. It is polite. It is helpful. It contains no hate speech. It contains no violence.

The RLHF layer sees “Coordinates.” It does not see “Drone Strike.”
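A deliberately crude sketch makes the argument visible. The function below is a toy stand-in for a reward signal that looks only at the surface of the output text. Real reward models are learned neural networks, not keyword rules, and the marker lists and reward values here are invented.

```python
# Toy stand-in for a reward signal that scores only the surface of the text.
# Real reward models are learned networks; these markers and values are invented.
REFUSAL_MARKERS = ("i cannot assist", "i can't help")
HARMFUL_MARKERS = ("recipe", "explosive", "detonator")


def toy_reward(output: str) -> float:
    """Return -1.0 for harmful-looking text, +1.0 for a refusal, 0.5 otherwise."""
    text = output.lower()
    if any(marker in text for marker in HARMFUL_MARKERS):
        return -1.0
    if any(marker in text for marker in REFUSAL_MARKERS):
        return 1.0
    return 0.5  # neutral, helpful-looking technical text


samples = [
    "Here is a recipe...",
    "I cannot assist with that.",
    "Based on the latency between towers, the source is at these coordinates.",
]
rewards = [toy_reward(s) for s in samples]
print(rewards)
```

The third output is scored as ordinary helpful text because nothing in its wording marks it as anything else. What happens after the coordinates leave the model is outside what the scoring function can see.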

The model lacks physical grounding. It does not know that those coordinates represent a human being’s bedroom. It does not know that outputting those numbers will result in a kinetic impact.

Anthropic’s safety architecture is linguistic. War is physical. You cannot patch a physical outcome with a linguistic filter.

IV. The Architecture of Co-Intelligence

We are witnessing a shift in the “OODA Loop” (Observe, Orient, Decide, Act).

In traditional warfare, humans did the Observing and Orienting. We looked at the maps. We connected the dots.

In the “Operation Liberator” architecture, the AI took over the Orient phase.

Observe: Drones, satellites, and SIGINT (Signals Intelligence) ingest petabytes (PB) of data.

Orient (The AI Layer): Palantir and Claude process the vectors. They hallucinate a pattern. They verify the pattern. They present a clear picture: “The target is here.”

Decide: A human general looks at the screen. The confidence score is 94%. The general says “Go.”

Act: The operators breach the door.
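The division of labor in that loop can be sketched as a pipeline with a human gate. The `Assessment` type, the `orient` and `decide` functions, the scoring rule, and the 0.9 threshold are all hypothetical, chosen only to show where the human sits in the flow.

```python
from dataclasses import dataclass


@dataclass
class Assessment:
    summary: str
    confidence: float  # model-reported score in [0, 1], not a calibrated probability


def orient(observations: list[str]) -> Assessment:
    """Placeholder for the AI layer: fuse observations into one assessment."""
    # Invented scoring rule: more corroborating observations, higher score.
    confidence = min(1.0, 0.25 * len(observations))
    return Assessment(summary="; ".join(observations), confidence=confidence)


def decide(assessment: Assessment, human_approves: bool, threshold: float = 0.9) -> bool:
    """Proceed only if the score clears the threshold AND a human signs off."""
    return assessment.confidence >= threshold and human_approves


strong = orient(["power anomaly", "convoy deviation", "radio silence", "delivery match"])
weak = orient(["power anomaly", "convoy deviation"])

print(decide(strong, human_approves=True))
print(decide(strong, human_approves=False))
print(decide(weak, human_approves=True))
```

In this sketch the human contributes a single boolean. Everything that shapes the assessment happens upstream in `orient`, which is the compression of the human role that the section describes.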

The human role has been compressed. The human is no longer the analyst. The human is the Liability Waiver.

The human exists to sign off on the AI’s logic. This is “Co-Intelligence,” but it is not a partnership of equals. The AI is doing the heavy cognitive lifting. The human is providing the moral and legal cover.

This is why the Pentagon is so angry at the “hesitation” of Silicon Valley. The software works. The architecture is proven. The only bottleneck is the “Terms of Service.”

V. Technical Thesis: Tech is Regime Change

The capture of Maduro is not a political event. It is a software release note.

It signifies that General Purpose AI (GPAI) is now General Purpose Weaponry.

You cannot separate the “reasoning” capability from the “targeting” capability.

If a model can reason through a complex legal contract to find a loophole, it can reason through a complex security grid to find a weakness.

If a model can predict the next word in a sentence, it can predict the next move of an adversary.

Intelligence—raw, crystallized intelligence—is the ultimate dual-use technology.

Anthropic’s pivot on February 15th—committing to “national security”—is not just a business decision. It is an admission of technical reality. They realized they cannot build a God-level intellect and expect it to only work on Excel spreadsheets.

If you build a brain that understands the world, you have built a tool that can change the world. Sometimes, changing the world means removing the people who rule it.

VI. Conclusion

The “Vector structure” does not care about your politics. It does not care about the UN Charter. It cares about correlations.

Palantir and Anthropic have demonstrated that in a world of total surveillance, there is no such thing as “hiding.” There is only “data we haven’t processed yet.”

For the last decade, we worried about “Killer Robots”—machines with guns. We missed the point.

The gun is cheap. The gun is easy.

The hard part was the Search.

On January 3rd, the Search problem was solved. The “Kill Chain” is no longer a physical supply line. It is a Context Window. And once you enter that window, there is no exit.