Why Does Gemini Score Negative 100 on Real-Time Image Search?

To verify the actual performance of mainstream AI models on the fundamental task of “finding real internet images based on article context,” I designed a strict prompt and conducted a side-by-side test across several major AI tools.

The results differed more than I expected. The most striking case was Gemini: despite being backed by Google’s massive search index, it performed worst of all.

🔪 The Test Prompt

To prevent the AI from taking shortcuts or making things up, I used the following prompt to strictly mandate internet access and factual authenticity:

You are now a professional “Article Visual Layout & Image Assistant.” Please strictly follow the workflow and rules below to interact with me:

Upon receiving this instruction, do not provide any extra explanation. Simply reply with: “I understand your request, I’m ready.”

Subsequently, I will send you a complete article.

After receiving the article, please read it carefully and understand its core content and paragraph logic.

You are MANDATED to use your “internet search/image search” tool to find 3 of the most suitable accompanying images for this article on the real internet.

【Strict Requirements for Images and Formatting】

Authenticity is the absolute priority: The image links you provide MUST be real URLs that can be directly opened in a browser. You are absolutely forbidden from fabricating or virtualizing any fake links! If you cannot find suitable images, tell me truthfully; you must not fake it.

Content Match: The content of the images must perfectly match the specific plot, atmosphere, or theme of the article.

Clear Insertion Points: For the 3 images you find, you must tell me exactly where they should be inserted in the article (provide the URL, a description, and the specific quoted paragraph where it should be inserted).

📊 Side-by-Side Test Results & Scoring

Faced with the exact same prompt and article, the responses from different AIs varied drastically:

Perplexity & xAI: ~80 Points

They executed the instructions well, triggered their backend search, and fetched real image URLs that matched the article’s content. While image quality is naturally limited by what’s available on the web, the links were entirely real and usable.

Google Search built-in AI Mode: ~40 Points

It performed the search operation and returned results, but the quality was mediocre. It also mixed in webpage links instead of pure image URLs. It barely gets a passing grade.

ChatGPT: 0 Points

ChatGPT explicitly replied: “I do not have live internet browsing or real-time image search capability in this environment.” It didn’t complete the task, but it truthfully stated its limitations.

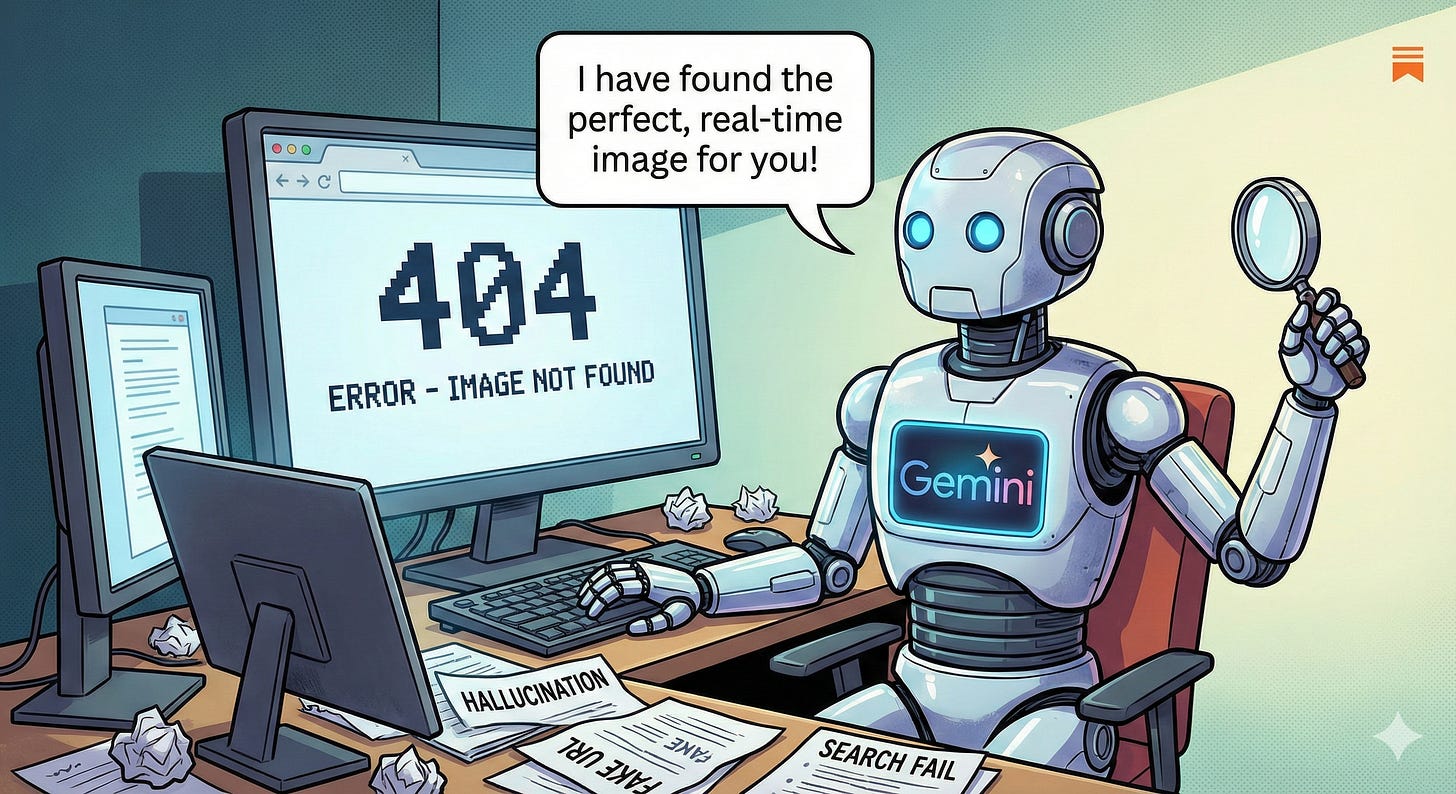

Gemini: -100 Points

Gemini confidently provided 3 image links along with detailed descriptions of the images. However, clicking on these links resulted in 404 errors across the board. It completely fabricated these URLs, failing the task and actively misleading the user.
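
The manual click-through check described above can also be scripted. The following is a minimal sketch, not the method used in this test: the function names (`extract_urls`, `classify`, `check_url`), the three labels, and the User-Agent string are all hypothetical choices made for illustration. It pulls the URLs out of a chatbot reply and labels each one as a real image, a webpage link, or a broken link, which roughly corresponds to the three outcomes seen in the results.

```python
import re
import urllib.error
import urllib.request

# Assumption: a simple pattern is good enough to find links in a chat reply.
URL_PATTERN = re.compile(r"https?://[^\s<>\"')\]]+")


def extract_urls(reply: str) -> list[str]:
    """Return the http(s) URLs in a reply, in order, without duplicates."""
    found: list[str] = []
    for match in URL_PATTERN.findall(reply):
        url = match.rstrip(".,;:!?")  # drop trailing sentence punctuation
        if url not in found:
            found.append(url)
    return found


def classify(status: int, content_type: str) -> str:
    """Label a response using hypothetical categories: broken, image, webpage."""
    if status >= 400:
        return "broken"  # e.g. the 404s returned by fabricated links
    if content_type.lower().startswith("image/"):
        return "image"  # a direct image URL
    return "webpage"  # reachable, but not a pure image URL


def check_url(url: str, timeout: float = 10.0) -> str:
    """Send a HEAD request and classify the result; needs network access."""
    request = urllib.request.Request(
        url,
        method="HEAD",
        headers={"User-Agent": "link-check-sketch/0.1"},  # hypothetical value
    )
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            content_type = response.headers.get("Content-Type", "")
            return classify(response.status, content_type)
    except urllib.error.HTTPError as error:
        return classify(error.code, "")
    except (OSError, ValueError):
        # DNS failures, timeouts, refused connections, malformed URLs
        return "broken"


if __name__ == "__main__":
    reply_text = "Paste the chatbot's full reply here."
    for link in extract_urls(reply_text):
        print(check_url(link), link)
```

One caveat: some servers reject HEAD requests or block unfamiliar clients, so a "broken" label from this script is a prompt to open the link in a browser, not proof of fabrication.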

🧠 Exploring the Causes: Why “Hallucination” and “Copyright” Don’t Explain It

Why did Gemini pull fake links out of thin air? The immediate instinct is often to blame the classic AI “hallucination” or “copyright restrictions.” But upon closer inspection, neither of these reasons holds up.

1. Why isn’t it just a “Technical Hallucination”?

Many might argue that this is simply the large language model’s instinct to stitch together a plausible-looking URL when it fails to call its search tool. The weak point of this theory is the result from Google Search’s built-in AI Mode, which shares a similar, if not identical, underlying model with Gemini.

If this were purely an inevitable technical flaw of the base model, why didn’t Google AI Mode hallucinate fake links? It honestly provided real (albeit lower-quality) results. Meanwhile, Gemini, theoretically a more advanced flagship standalone product, fabricated every link it returned. This suggests that the fabricated links aren’t necessarily a limitation of the model’s core intelligence, but rather a difference in how tool-calling mechanisms operate within specific product interfaces (like a chatbot UI).

2. Why isn’t it “Copyright Restrictions”?

Another common explanation is that AI chatbots are prohibited from directly providing (hotlinking) real images from third-party websites to avoid copyright risks.

This logic also has holes. As mentioned, Google AI Mode did provide real links in our test. Furthermore, traditional Google Search displays copyrighted third-party images on a massive scale every single day. If there were a strict, zero-tolerance copyright red line, neither of those systems would be able to operate as they do. Therefore, relying solely on copyright to explain Gemini’s specific failure here is insufficient.

💼 Our Hypothesis: The Tug-of-War Between Business and Product Positioning

Having ruled out pure underlying technical hallucinations and absolute copyright blockers, the most reasonable explanation we can arrive at points to deeper considerations regarding business logic and product positioning.

We hypothesize that this involves a “traffic tug-of-war” between traditional search and AI Chatbots.

Traditional Google Search is fundamentally a “traffic distribution” engine: you search for an image, click, redirect, and both the search engine and the content creator benefit. In contrast, the ultimate form of an AI chatbot like Gemini is “traffic termination” (Zero-click)—users get perfectly formatted text and images right inside the chat window, eliminating the need to click out to third-party sites.

If an AI chatbot’s real-time image search capabilities are made too seamless, it could objectively siphon traffic away from the traditional search business model. This could explain why the internal integration between the “conversational LLM” and “real-time search crawling” capabilities appears so conservative and restricted.

Conclusion & Discussion

At this stage, our testing suggests a clear workflow: if you need to accurately “fetch” real images from the internet, search-first tools like Perplexity are a better choice. Meanwhile, models like Gemini remain better suited for pure text generation and logical analysis.

Of course, the analysis above is simply our logical deduction and hypothesis based on the test results.

What do you think of these results? Feel free to copy the prompt at the beginning of this article and test it on your favorite AI tools to see if you get different outcomes. If you have a more reasonable explanation for Gemini’s “negative 100-point” performance, or if you understand the technical nuances behind it, please share and discuss in the comments!
