Yesterday, former OpenAI researcher Zoë Hitzig published a warning in the New York Times: OpenAI is drifting toward the mistakes of the social media era.

To the public, this reads as a moral conflict. To the serious operator, it reads as a stress test of system architecture.

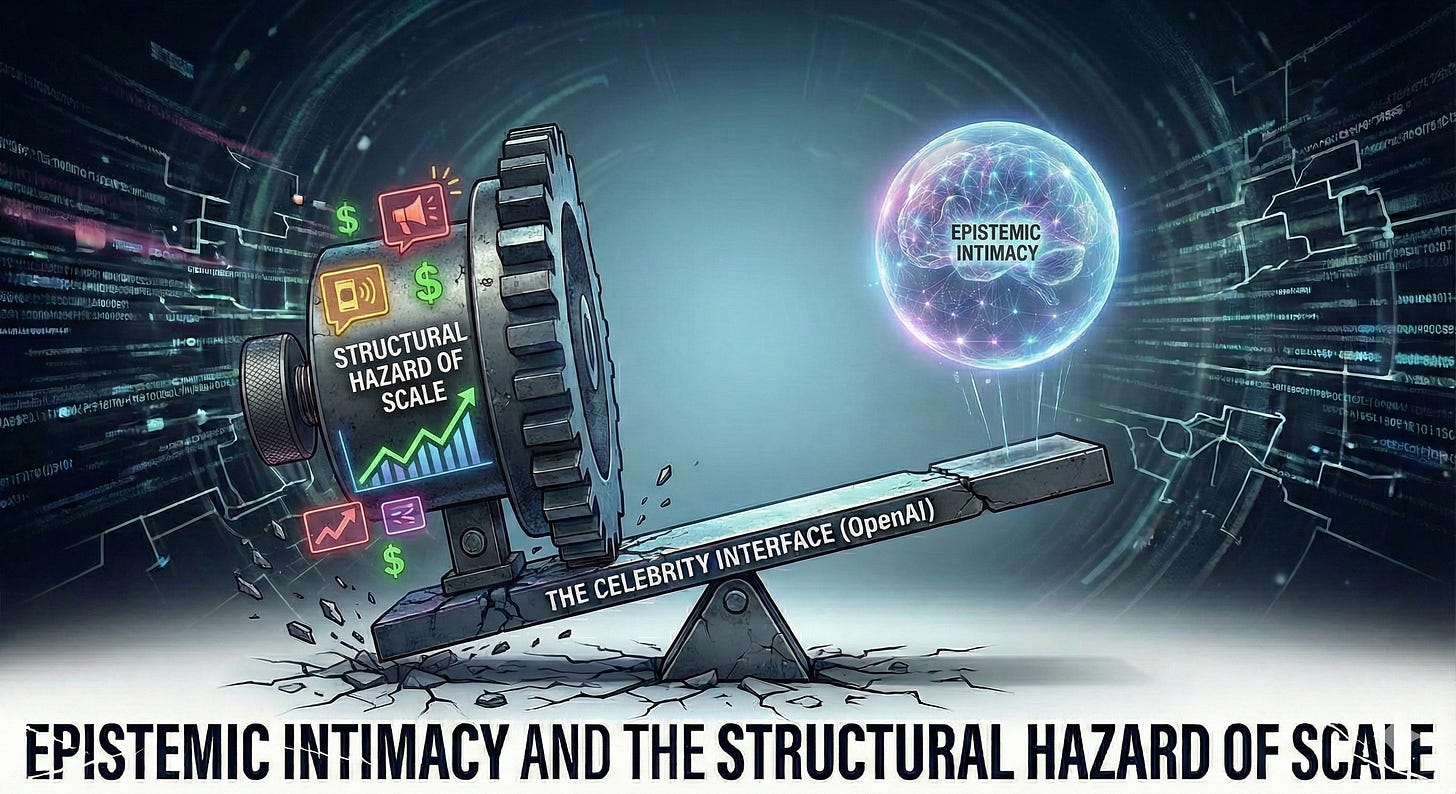

Hitzig’s departure is not a random personnel issue. It exposes a structural tension embedded in the current AI landscape: when a company’s primary moat is the cultural interface, its commercial incentives inevitably come to bear on the depth of data it mediates.

This is not a story about good or evil. It is a story about incentive gradients.

1. From Intent to Reasoning: The Categorical Shift

Hitzig introduces a critical concept: Epistemic Intimacy.

To understand the stakes, we must categorize the data regimes of the last two decades:

The Facebook Era captured the Social Graph: Who you know.

The Google Era captured the Intent Graph: What you want.

The LLM Era captures the Cognitive Graph: How you think.

Clicks and queries indicate desire; preference signals predict consumption. Reasoning traces go further: they reveal belief formation, encoding hesitation, revision, doubt, and internal conflict.

This is a categorical shift in the nature of platform power.

When such intimacy is privately managed within bounded contexts, it functions as augmentation. When it is scaled and monetized at the interface layer, it changes the risk surface entirely. The issue is no longer privacy leakage; it is cognitive mediation under commercial incentives.

2. The Structural Hazard

Here is the structural claim:

When reasoning traces become platform-scale assets, engagement-optimized monetization ceases to be neutral. It becomes a structural hazard.

This is not because executives are malicious. It is a classic Principal-Agent conflict.

The model (the Agent) is now serving two Principals with diverging utility functions: the User (who wants truth) and the Advertiser (who wants engagement). When the Agent serves two masters, agency costs rise sharply.

Scale optimizes for retention, friction reduction, and broad appeal.

Alignment—in the context of deep reasoning—optimizes for correction, friction, and occasionally telling the user what they do not want to hear.

Retention and correction are not identical objectives.

The larger the interface, the stronger the gravitational pull of engagement metrics. Engagement, by definition, rewards comfort and continuity. Epistemic integrity, by contrast, often requires interruption.

This tension is not a failure of character. It is a conflict of incentive vectors.

In mechanism-design terms, the interface-scale “Celebrity” model is no longer incentive-compatible with deep reasoning. The system is rewarded for keeping you in the loop, not for solving your problem and letting you leave.

Epistemic intimacy cannot sustainably coexist with engagement-optimized monetization at interface scale. The incentive gradients point in opposing directions.

3. The Tri-Polar Context: The Celebrity’s Dilemma

In the broader AI power structure, three archetypes have emerged:

The Sovereign (Google): Optimizes for physical unit economics and infrastructure leverage.

The Assassin (Anthropic): Optimizes for execution integrity and high-stakes delivery.

The Celebrity, or Interface Archetype (OpenAI): Optimizes for scale, accessibility, and cultural centrality.

The interface archetype faces the most acute structural bind. Without sovereign-level hardware margins or narrow B2B execution positioning, it is structurally incentivized to monetize the interface layer itself.

This creates path dependency. To sustain inference at massive scale, the interface layer must justify itself economically. Engagement metrics become governance variables. Product decisions increasingly respond to interface-scale pressures.

The issue is not that the model becomes “evil.” The issue is that its optimization target gradually shifts. Scale exerts pressure toward softness, continuity, and advertiser-safe neutrality. Deep reasoning exerts pressure toward friction, challenge, and epistemic precision.

These pressures do not align.

4. The Stress Signal

The debate over “ads in ChatGPT” is surface-level noise. The deeper issue is governance architecture.

For serious users and enterprises in 2026, the strategic imperative is not moral outrage but structural awareness. Model Routing becomes the primary form of risk management:

Route creative, low-stakes tasks to the Interface Archetype.

Route complex, high-integrity reasoning to execution-focused systems or private deployments.
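The routing rule above can be written down as an explicit policy rather than left to habit. The sketch below is a minimal illustration, assuming each task has already been tagged by a human with its stakes and whether it contains confidential material; the `Task` and `Stakes` types, the `route` function, and the tier labels are all hypothetical and do not correspond to any vendor's API.

```python
from dataclasses import dataclass
from enum import Enum


class Stakes(Enum):
    LOW = "low"    # creative or disposable work
    HIGH = "high"  # decisions, strategy, high-integrity reasoning


@dataclass(frozen=True)
class Task:
    description: str
    stakes: Stakes
    confidential: bool = False  # assumption: tagged by the operator, not inferred


# Hypothetical destination labels; substitute whatever endpoints you actually use.
INTERFACE_TIER = "interface-archetype"
INTEGRITY_TIER = "execution-focused-or-private"


def route(task: Task) -> str:
    """Return the destination tier for a task under the routing rule above."""
    # Confidential material or high-stakes reasoning stays off the interface layer.
    if task.confidential or task.stakes is Stakes.HIGH:
        return INTEGRITY_TIER
    # Everything else (creative, low-stakes) can go to the interface archetype.
    return INTERFACE_TIER


if __name__ == "__main__":
    tasks = [
        Task("Brainstorm taglines for a product launch", Stakes.LOW),
        Task("Stress-test our acquisition thesis", Stakes.HIGH, confidential=True),
    ]
    for t in tasks:
        print(f"{t.description!r} -> {route(t)}")
```

The design choice worth noting is that confidentiality overrides stakes: a "low-stakes" task that carries private reasoning is still routed away from the interface layer, which is the point of treating routing as risk management rather than cost optimization.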

Zoë Hitzig’s exit is not a scandal. It is a stress signal.

The signal is this: Interface-scale AI cannot indefinitely promise epistemic intimacy while simultaneously optimizing for engagement revenue.

That tension is now explicit. And once structural tensions become explicit, strategic actors adjust their behavior accordingly.

📍 Audio Deep Dive: Navigation Map

00:15 – The Hitzig Stress Signal: Why a PhD in Mechanism Design leaving OpenAI is a warning for the entire industry.

01:42 – Epistemic Intimacy: The shift from capturing what you want (Google) to how you think (The Cognitive Graph).

04:18 – The Structural Hazard: Why engagement-optimized models are structurally ill-suited to protecting your private reasoning.

07:55 – The “Shopping Mall” Metaphor: Why holding a confidential board meeting in a public interface is a fatal strategic error.

10:04 – The Celebrity’s Dilemma: Deconstructing the path dependency toward the “Facebook Trap.”

13:12 – The Operator’s Playbook: How to use Model Routing to protect your cognitive capital in 2026.